A Live Disagreement

In April 2025, David Silver and Richard Sutton circulated Welcome to the Era of Experience, a preprint chapter arguing that the next major phase of artificial intelligence will not be driven mainly by larger piles of human-generated text. Their claim is sharper than "reinforcement learning is useful." They argue that future agents will acquire much of their capability from experience: data generated by acting in environments, observing consequences, and improving over time. Human data will still matter, but it will no longer be the dominant medium of progress. See Silver and Sutton, 2025.

That thesis quickly became more than a position paper. In April 2026, reporting on David Silver's London AI startup, Ineffable Intelligence, described a 1.1 billion USD seed round at a 5.1 billion USD valuation to pursue a "superlearner" agenda based on autonomous learning rather than continued dependence on human-written data. Treat the funding detail as a sign of institutional seriousness, not as evidence that the thesis is true.

The disagreement is now live. One side says scaling is not exhausted: the correct response to capability gaps is more compute, more data, better curation, longer context, better post-training, better tool use, and harder evaluations. Another side says that text and human preference feedback are running into limits because they are reports about the world rather than direct contact with the world. A third side says the bottleneck is not just the amount or kind of data, but the structure of the learner itself: architectures, inductive biases, world models, and representations that make some kinds of generalization possible and others difficult.

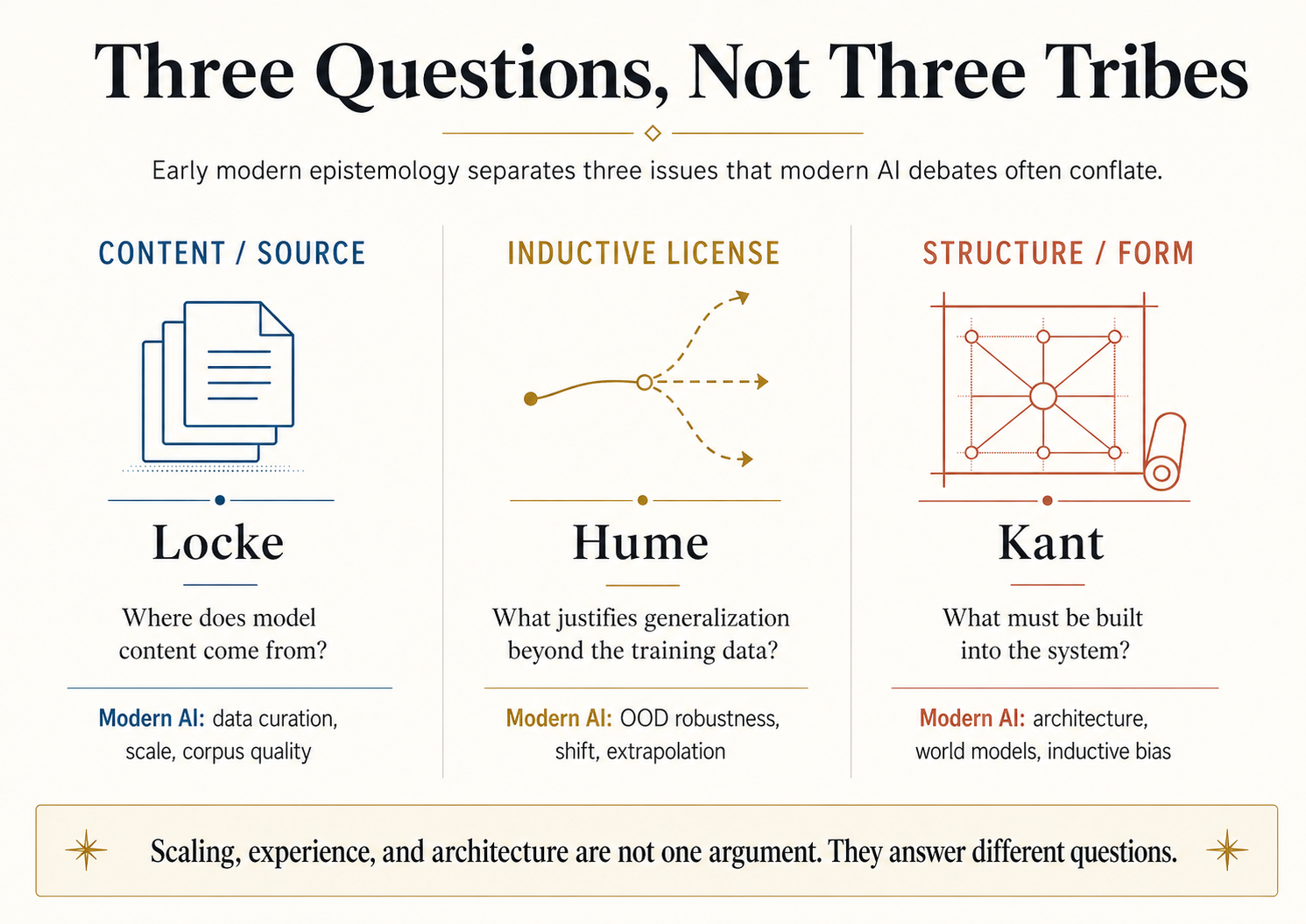

The useful philosophical point is not "Locke predicted LLMs" or "Kant invented world models." That is cheap and false. The useful point is that early modern epistemology separated three questions that the AI scaling debate often runs together:

| Question | Early modern anchor | Modern AI version |
|---|---|---|
| Where does content come from? | Locke | Training data, corpus quality, synthetic data, interaction data |
| What licenses inference beyond what has been observed? | Hume | Generalization, distribution shift, extrapolation, deployment risk |
| What structure must the learner bring to experience? | Kant | Architecture, inductive bias, representation, world models |

This essay uses Locke, Hume, and Kant as analytical lenses. The lens is useful only if it clarifies the modern disagreement without pretending the old philosophers were secretly debating transformers.

Locke, Hume, and Kant: Content, Induction, Structure

A reader who knows the seventeenth- and eighteenth-century canon can skim this section. The compressed version is enough for the AI argument.

John Locke published An Essay Concerning Human Understanding in 1689. His famous claim is that the mind begins as "white paper, void of all characters, without any ideas," and that the material of knowledge comes from experience. Locke divides experience into two streams: sensation, which provides ideas from the external world, and reflection, which provides ideas from the mind's own operations. He does not deny that the mind has faculties. He denies that it begins with innate content.

The Lockean question is therefore a content question: where did the ideas come from? In modern AI terms, this is the question of training data. What distribution supplied the model's examples? What was absent? What was overrepresented? What distortions entered through language, platform, curation, filtering, preference data, or synthetic generation?

David Hume takes the empiricist position to its sharpest edge. If all knowledge begins in experience, what justifies inference beyond experience? We observe past regularities and expect future regularities. But the next case has not yet been observed. No logical contradiction follows if the future diverges from the past. To say "induction has worked before, so it will work again" uses induction to justify induction.

The Humean question is therefore an inductive-license question: what authorizes the leap from observed cases to unobserved cases? In modern AI terms, this is not merely "test accuracy." It is the question of what a held-out score licenses when the deployment distribution changes. A model that performs well on examples drawn from the same source as training data may still fail when the task, tools, incentives, environment, or user population shifts.

Immanuel Kant accepts that knowledge needs sensory content, but denies that content alone can explain experience. His compact formula is: "Thoughts without content are empty, intuitions without concepts are blind." The empiricist half says that concepts without input are empty. The anti-empiricist half says that raw input without structure is not yet experience. The mind contributes form.

The Kantian question is therefore a structure question: what must be built into the system for experience to become knowledge? In modern AI terms, this is the question of architecture and inductive bias. A transformer, a convolutional net, a graph neural network, a diffusion model, and a model-based RL agent do not merely consume the same data differently. They make different hypotheses about what kinds of structure matter.

| Lens | Core issue | Modern pressure point | Failure mode if ignored |
|---|---|---|---|
| Locke | Content / source | What data produced the model's representations? | Treating text about the world as if it were the world itself |
| Hume | Induction / license | What justifies performance beyond the observed distribution? | Treating held-out IID success as deployment reliability |
| Kant | Structure / form | What does the learner impose before learning starts? | Pretending "data alone" exists independently of architecture |

That is the spine of the essay. Modern AI debates are not one argument. They are at least three.

The Modern AI Map

The first modern family is the scaling family. It says that general methods using computation and large data tend to win over hand-engineered structure. This is the spirit of Sutton's bitter lesson: over the long run, methods that scale with computation have repeatedly beaten methods that try to encode too much human domain knowledge by hand. See Sutton, 2019.

This position has strong empirical backing in language modelling. Kaplan et al. showed smooth empirical scaling laws for neural language model cross-entropy loss as a function of model size, dataset size, and compute. Hoffmann et al. then revised the compute-optimal prescription in the Chinchilla paper, arguing that many large models had been undertrained relative to available compute and that model size and training tokens should be scaled together. See Kaplan et al., 2020 and Hoffmann et al., 2022.
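To make "smooth empirical scaling law" concrete, here is a minimal sketch of how a power-law relationship between model size and loss can be fitted as a straight line in log-log space. The data is synthetic and the constants (`true_a`, `true_alpha`, the range of `sizes`) are hypothetical values chosen for illustration; they are not the fitted values reported by Kaplan et al. or Hoffmann et al.

```python
import numpy as np

def fit_power_law(sizes, losses):
    """Fit loss ~ a * size**(-alpha) by least squares on log(size), log(loss)."""
    slope, intercept = np.polyfit(np.log(sizes), np.log(losses), deg=1)
    return float(np.exp(intercept)), float(-slope)

# Synthetic data only: hypothetical constants, not values from any paper.
rng = np.random.default_rng(0)
true_a, true_alpha = 10.0, 0.08
sizes = np.logspace(6, 10, num=9)                 # hypothetical parameter counts
noise = rng.normal(0.0, 0.01, size=sizes.shape)   # small multiplicative noise
losses = true_a * sizes ** (-true_alpha) * np.exp(noise)

a_hat, alpha_hat = fit_power_law(sizes, losses)
print(f"fitted a={a_hat:.2f}, alpha={alpha_hat:.3f}")

# Extrapolating past the largest observed size assumes the trend continues.
predicted_loss = a_hat * 1e11 ** (-alpha_hat)
print(f"extrapolated loss at size 1e11: {predicted_loss:.3f}")
```

The fit itself is ordinary regression. The extrapolation in the last lines is the part that carries an assumption: it is only as good as the belief that the same trend holds outside the measured range.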

The second modern family is the experience family. It says that human-generated text is a limited medium. Text contains reports, abstractions, explanations, code, arguments, stories, and records of experiments. It does not contain the full stream of action and consequence by which agents learn to control environments. The Silver / Sutton thesis is empiricist in spirit, but not in the weak sense of "more internet text." It is empiricist in the stronger sense: let the agent encounter the world, act, receive feedback, and improve from that feedback.

The third modern family is the architecture family. It says that neither text scaling nor interaction data is enough unless the system has the right form. LeCun's JEPA and world-model program is a prominent version: intelligent systems need representations that support prediction, planning, abstraction, and reasoning under uncertainty. See LeCun, 2022. This is not literally Kant. But it has a Kantian shape: the system must bring structure to experience, not merely absorb data.

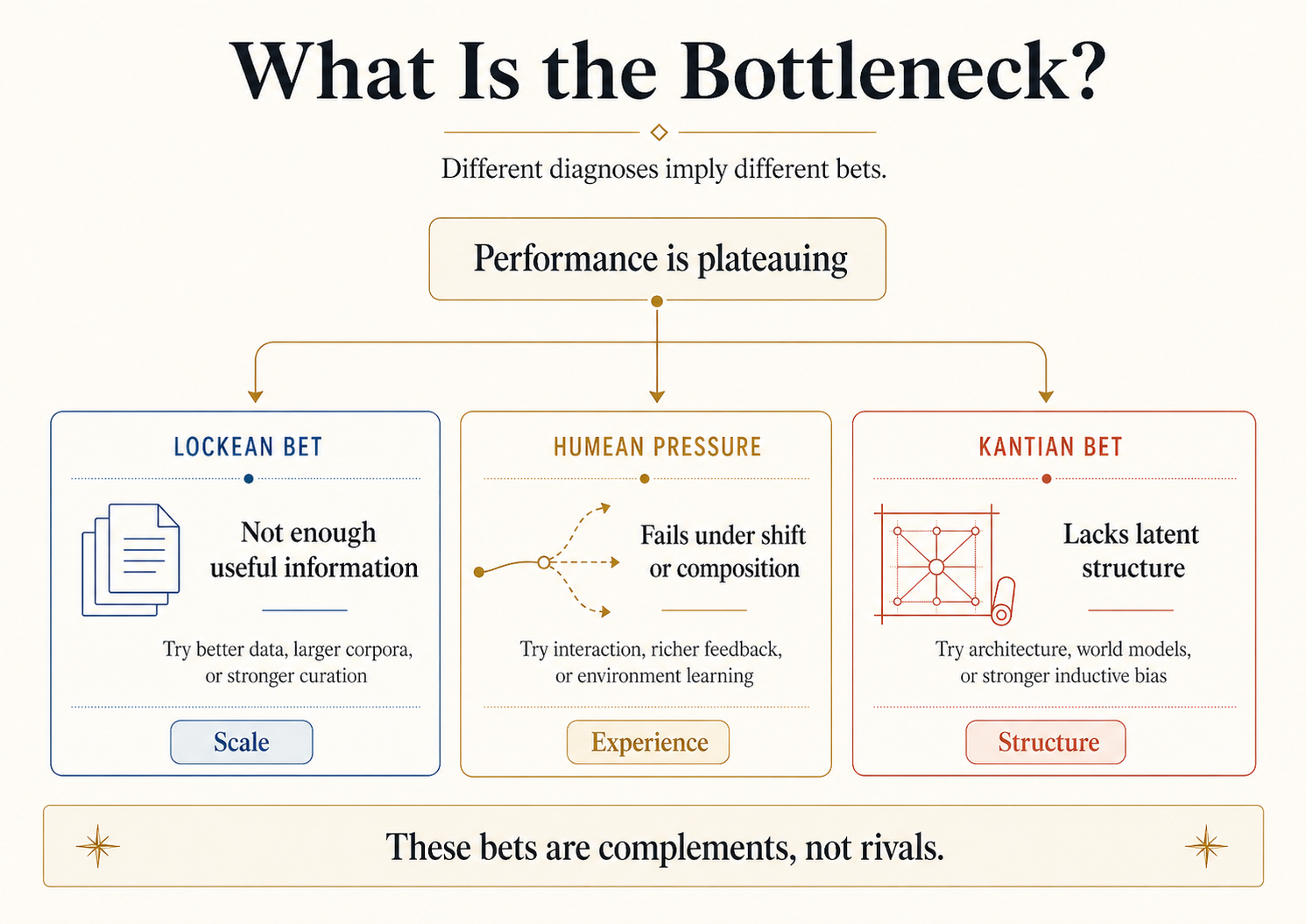

The real disagreement is not whether data, experience, and architecture matter. They all matter. The disagreement is which one is currently doing the limiting.

If the bottleneck is content, improve data: curation, coverage, multimodality, synthetic pipelines, domain-specific corpora, and quality control. If the bottleneck is inductive license, improve evaluation and feedback: OOD benchmarks, environment interaction, long-horizon tasks, calibration, uncertainty, and deployment monitoring. If the bottleneck is structure, improve the learner: architectures, memory, world models, tool interfaces, causal representations, and inductive biases.

Those are different bets. Confusing them produces bad arguments and wasted engineering effort.

Where Scaling Evidence Stops

The clean empiricist claim is: more data produces better generalization. That sentence is too vague to be useful. It hides at least three different meanings of generalization.

| Kind of generalization | Meaning | Typical evidence | Main danger |
|---|---|---|---|
| In-distribution | Good performance on held-out examples drawn from the same source as training | Scaling laws, validation loss, benchmark scores | Mistaking IID success for real-world reliability |
| Out-of-distribution | Good performance after the input distribution shifts | Robustness tests, new benchmark versions, deployment studies | Assuming the future resembles the training distribution |
| Compositional / systematic | Good performance on novel combinations of familiar elements | Synthetic tasks, reasoning suites, programmatic evals | Memorizing patterns without learning the rule-like structure |

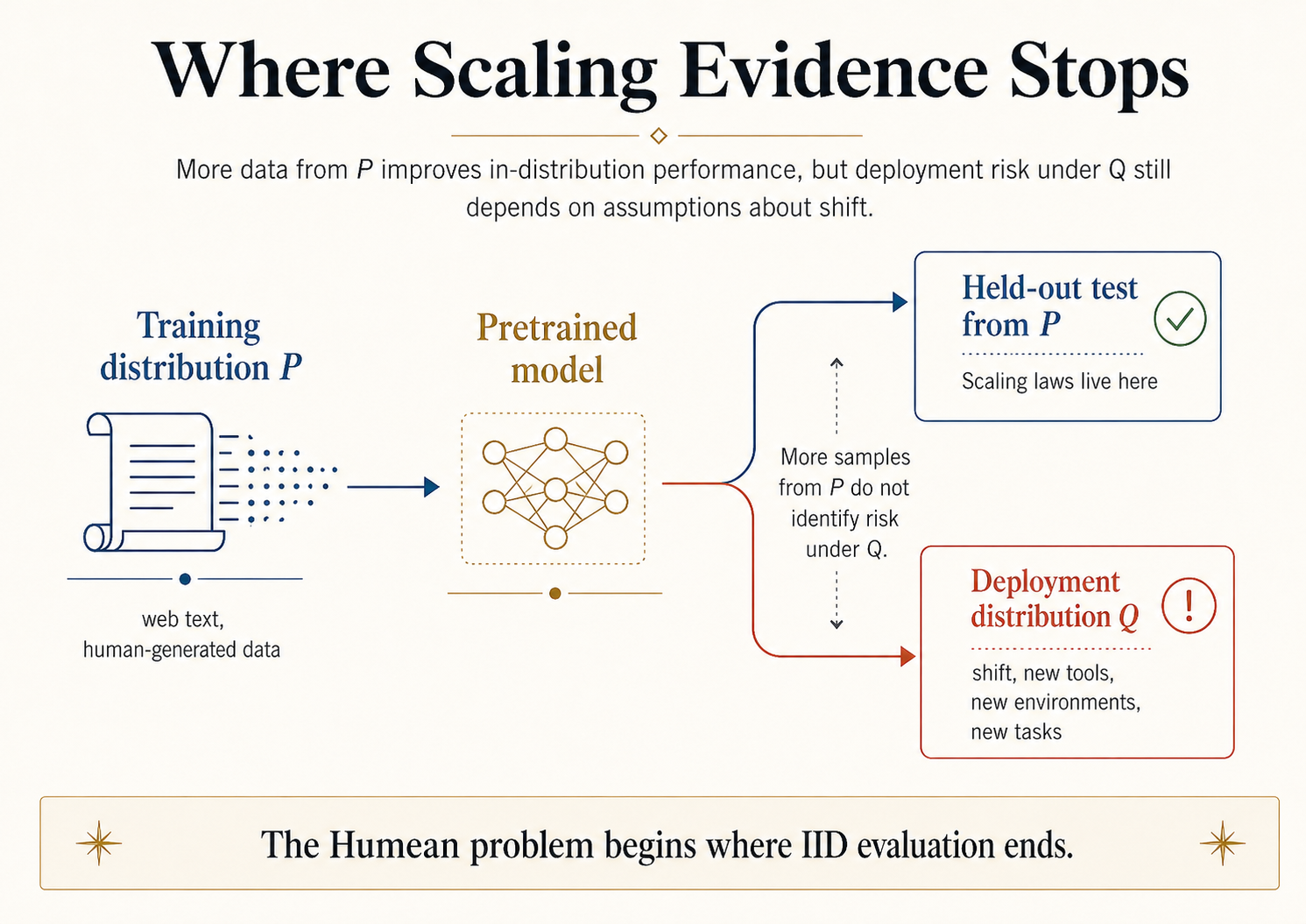

The evidence for scaling laws is strongest in the first row. Kaplan-style and Chinchilla-style results are about loss and performance under controlled training and evaluation regimes. They matter. They are not trivial. But they do not, by themselves, settle OOD generalization.

This is exactly where Hume becomes useful. Hume's problem is not "induction never works." Induction obviously works often enough for science, engineering, and daily life. The problem is that past success alone does not logically guarantee future success. A held-out test set is still part of a measurement regime. Performance under that regime does not automatically license confidence under a different regime.

Machine learning has its own version of this point. Recht et al. recreated ImageNet-like test sets and found that models suffered notable accuracy drops on the new sets, even though gains on the original benchmark still tended to transfer. The lesson is not that benchmarks are useless. It is that even carefully recreated evaluation conditions can expose a gap between nominal test performance and new-data performance. See Recht et al., 2019. OOD surveys make the broader point directly: the IID assumption often fails in real applications, and distribution shift can substantially degrade deployment performance. See Liu et al., 2021.

Statistical Translation

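Let $P_{\text{train}}$ be the training distribution and $P_{\text{deploy}}$ be the deployment distribution. Scaling improves estimates and loss under $P_{\text{train}}$, or under evaluation distributions close to $P_{\text{train}}$. The deployment question is risk under $P_{\text{deploy}}$, where $f$ is the trained model and $\ell$ is a loss function:

$$R_{\text{deploy}}(f) = \mathbb{E}_{(x, y) \sim P_{\text{deploy}}}\big[\ell(f(x), y)\big]$$

More samples from $P_{\text{train}}$ do not identify $R_{\text{deploy}}(f)$ unless we assume something connecting $P_{\text{train}}$ and $P_{\text{deploy}}$. That connection might come from a stability assumption, an invariance assumption, a causal assumption, a simulator, an environment feedback loop, an architectural prior, or a validated monitoring process. The key point is simple: the assumption is doing real work. It should not be hidden inside the word "generalization."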
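A toy simulation can make the point tangible. The sketch below is an illustration under stated assumptions, not a model of any real system: the target function `true_fn`, the input ranges, and the choice of a linear learner are all hypothetical. A linear model is fitted on inputs from one range (standing in for the training distribution) and scored both on held-out data from the same range and on inputs from a shifted range (standing in for the deployment distribution), at several training-set sizes.

```python
import numpy as np

def true_fn(x):
    # Hypothetical ground truth; the linear learner below cannot represent it.
    return x ** 2

def sample(rng, n, low, high, noise_sd=0.1):
    x = rng.uniform(low, high, size=n)
    y = true_fn(x) + rng.normal(0.0, noise_sd, size=n)
    return x, y

def fit_linear(x, y):
    slope, intercept = np.polyfit(x, y, deg=1)
    return slope, intercept

def mse(params, x, y):
    slope, intercept = params
    return float(np.mean((slope * x + intercept - y) ** 2))

rng = np.random.default_rng(0)
x_iid, y_iid = sample(rng, 20_000, 0.0, 1.0)   # held-out, same source as training
x_dep, y_dep = sample(rng, 20_000, 2.0, 3.0)   # shifted deployment inputs

results = {}
for n_train in (100, 10_000, 100_000):
    params = fit_linear(*sample(rng, n_train, 0.0, 1.0))
    results[n_train] = (mse(params, x_iid, y_iid), mse(params, x_dep, y_dep))
    print(n_train, "held-out MSE:", round(results[n_train][0], 4),
          "shifted MSE:", round(results[n_train][1], 2))
```

In this setup the held-out error is small at every training-set size, while the shifted error stays large no matter how many training samples are added. Nothing in the training data says how the function behaves outside the sampled range; only an extra assumption, or data from the new range, could.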

This is the Humean pressure inside the AI scaling debate. Scaling helps. But scaling evidence has a domain. Once the model leaves that domain, the argument needs extra assumptions.

Five Concrete Failure Modes

Abstract scaling-versus-shift arguments are easy to nod along to. The point is sharper when read against specific AI failures the field has documented in the last few years.

Benchmark contamination. Some of the eye-catching benchmark gains on closed-book QA, math reasoning, and code generation can be partly attributed to test items leaking into pretraining corpora. When researchers reconstruct or paraphrase the same questions, scores often drop. The model did not necessarily improve at the underlying skill; it improved at a measurement that overlapped with its training set. Scaling curves can look clean inside this leakage regime; deployment offers no such overlap.

Tool-use agents under API drift. A coding or browsing agent that succeeds on a benchmark frozen at training time can fail when an underlying API, web layout, or tool surface changes. The agent learned the fixed surface, not the task. Within-distribution success was reliable; the deployment distribution shifted, and the held-out score did not predict it.

Medical model drift. Classifiers trained on imaging data from one hospital's scanners, patient population, and protocol have repeatedly degraded when deployed at a different hospital. The pixels look similar; the joint distribution of image, label, indication, and acquisition parameters does not. Deployment did not change the model; it changed enough of the surrounding distribution that the held-out score no longer transferred.

Coding agents passing tests, failing hidden specs. SWE-bench-style evaluations measure whether the agent's patch makes the test suite green. Production engineering measures something larger: maintainability, architectural fit, security, performance, and review by humans who care about consistency. An agent can satisfy the exact verifier and miss the implicit specification entirely. The verifier defines what the benchmark score can license; the deployment problem includes specifications the verifier did not encode.

Out-of-distribution generalization in vision and language. Recht, Roelofs, Schmidt, and Shankar's careful reconstruction of ImageNet-like test sets exposed accuracy drops on the new sets even though improvements on the original benchmark still tended to transfer. The lesson was not that ImageNet is useless; it was that even carefully matched evaluation conditions can expose a gap between test performance and new-data performance.

These are not contrived counterexamples. They are among the most commonly reported failure modes for production AI systems shipped in 2024 and 2025. The Humean point, that past held-out scores do not by themselves license future deployment scores, is not abstract philosophy. It is a specific operational discipline that turns each of these failures into a question the team should have asked before shipping.
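The benchmark-contamination failure mode is the easiest of the five to probe mechanically. The sketch below is one simple heuristic, not a standard tool: it measures what fraction of a benchmark item's word n-grams also appear in a corpus. The function names, the whitespace tokenizer, the choice of `n=5`, and the example strings are all assumptions made for illustration.

```python
def ngrams(text, n):
    """Set of lowercase whitespace-token n-grams in a string."""
    tokens = text.lower().split()
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}

def overlap_fraction(benchmark_item, corpus_docs, n=5):
    """Fraction of the item's n-grams that also occur somewhere in the corpus."""
    item_grams = ngrams(benchmark_item, n)
    if not item_grams:
        return 0.0
    corpus_grams = set()
    for doc in corpus_docs:
        corpus_grams |= ngrams(doc, n)
    return len(item_grams & corpus_grams) / len(item_grams)

# Hypothetical corpus and benchmark items.
corpus = [
    "forum post: what is the capital of france and when was it founded ?",
    "an unrelated document about gradient descent and learning rates",
]
original = "What is the capital of France and when was it founded"
paraphrase = "Name the French capital city and give its founding date"

leaked = overlap_fraction(original, corpus)
reworded = overlap_fraction(paraphrase, corpus)
print(leaked)    # 1.0
print(reworded)  # 0.0
```

Exact n-gram matching only catches verbatim leakage. A paraphrased or translated copy of a test item in the training data would score zero here, so a low overlap is weak evidence of a clean benchmark, not proof.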

What "Experience" Can Mean in AI

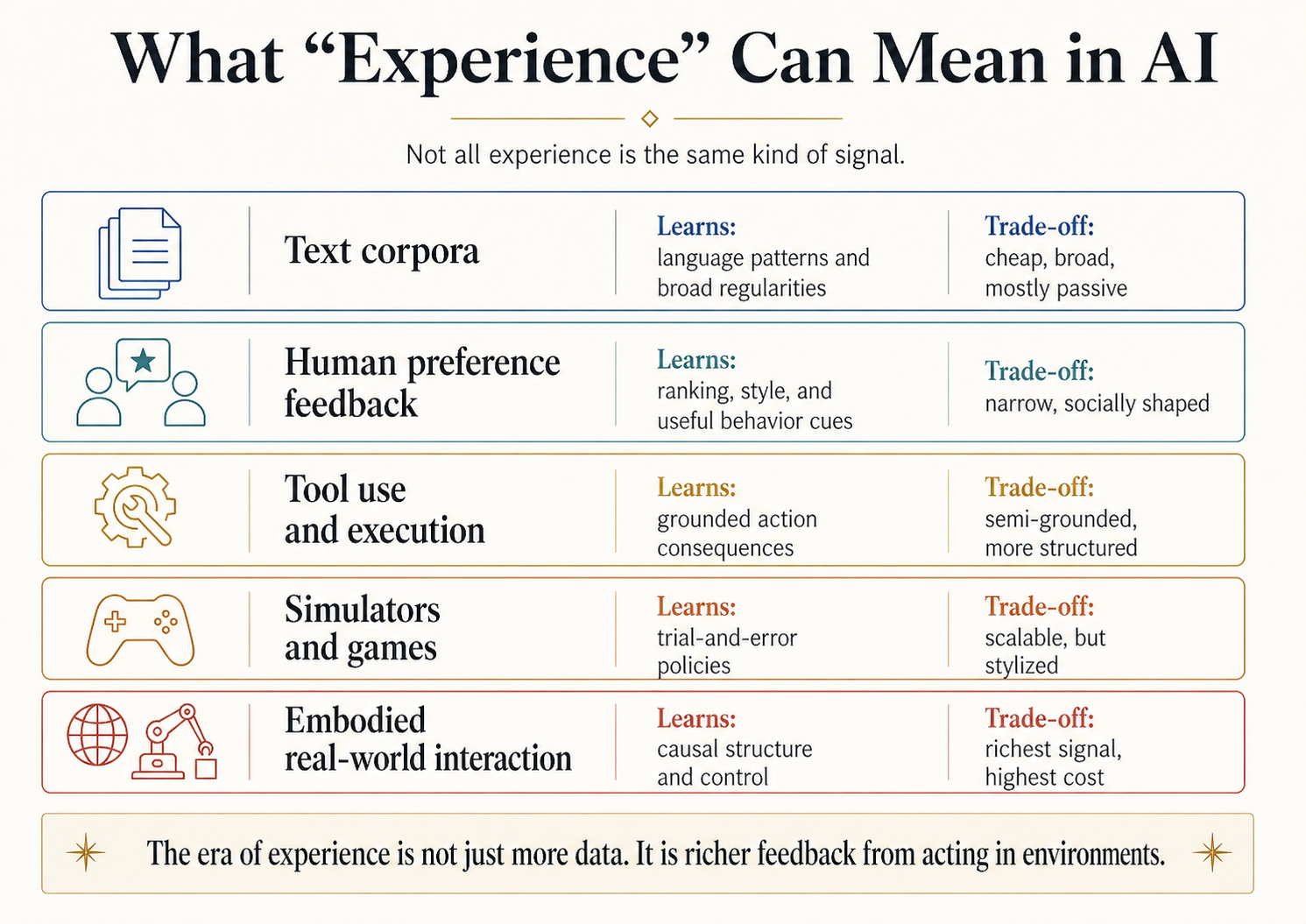

The word "experience" is overloaded. Locke, Hume, and Kant use it in one family of senses. Reinforcement learning researchers use it in another. LLM builders use it in another. Product teams use it in still another when they mean user feedback, logged interactions, and preference data.

A useful distinction:

| Signal type | What it teaches well | What it does not solve by itself |
|---|---|---|
| Text corpora | Language, facts, conventions, arguments, code patterns | Physical grounding, causal control, long-horizon consequence |
| Human preference feedback | Helpfulness, style, ranking, visible behaviour | Truth, causal structure, deep competence |
| Tool use and execution | Consequences of actions in structured digital environments | Broad transfer outside the tool / environment interface |
| Simulators and games | Trial-and-error policies, planning, exploration | Sim-to-real transfer, stylized objective mismatch |
| Embodied real-world interaction | Causal structure, control, sensorimotor grounding | Cost, safety, scalability, instrumentation |

This distinction matters because "the model needs experience" can mean several incompatible engineering programs. It might mean better RLHF. It might mean tool-augmented agents. It might mean formal environments for math and code. It might mean robotics. It might mean self-play. It might mean simulated economies. Those are not the same bet.

The Silver / Sutton argument is most interesting when read as a claim about self-improving feedback loops. Static human data is finite and backward-looking. Experiential data can, in principle, be generated by stronger agents acting in richer environments and creating new learning problems for themselves. That is a different kind of scaling law: not merely more tokens, but more consequential interaction.

The Humean caution still applies. Experience does not magically solve induction. An agent trained in one simulator may fail in another. A code agent trained on one benchmark may overfit the benchmark's style. A robot trained in a lab may fail in a kitchen. Experience helps only when the experience is connected to the deployment regime in the right way.
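The lab-versus-kitchen case can be shown in miniature. The sketch below is a toy two-armed bandit with an epsilon-greedy learner; the environments `lab_means` and `kitchen_means`, the step count, and the exploration rate are hypothetical values chosen only to illustrate the point. The agent learns entirely from its own interaction, then its learned greedy choice is scored in a second environment where the payoffs are swapped.

```python
import numpy as np

def train_bandit(arm_means, steps, rng, epsilon=0.1):
    """Epsilon-greedy value estimation from the agent's own pulls."""
    n_arms = len(arm_means)
    estimates = np.zeros(n_arms)
    counts = np.zeros(n_arms)
    for _ in range(steps):
        if rng.random() < epsilon:
            arm = int(rng.integers(n_arms))        # explore
        else:
            arm = int(np.argmax(estimates))        # exploit current estimate
        reward = rng.normal(arm_means[arm], 1.0)   # noisy feedback from the environment
        counts[arm] += 1
        estimates[arm] += (reward - estimates[arm]) / counts[arm]  # running mean
    return estimates

rng = np.random.default_rng(0)
lab_means = np.array([0.2, 1.0])       # hypothetical training environment
kitchen_means = np.array([1.0, 0.2])   # hypothetical deployment environment

estimates = train_bandit(lab_means, steps=5000, rng=rng)
greedy_arm = int(np.argmax(estimates))
lab_value = float(lab_means[greedy_arm])
kitchen_value = float(kitchen_means[greedy_arm])
print("learned arm:", greedy_arm, "lab value:", lab_value, "kitchen value:", kitchen_value)
```

The agent's experience was genuine and its learning was correct for the environment it acted in. The learned policy is still the worse choice in the second environment, because nothing in the first environment's feedback carried information about the second.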

The Elenchus: Test the Claim Before Using It

The house discipline for this series is simple: before leaning on a modern claim, test it. The claim under test here is:

More data produces better generalization.

Question 1: Better generalization of what kind? If the claim means lower held-out loss on examples drawn from the same broad distribution as the training data, then the scaling-law evidence is strong. If it means reliable deployment under changed tools, incentives, environments, and user populations, the claim is not established by scaling-law evidence alone.

Question 2: More data of what kind? More human text is not the same as more tool-execution traces, more formal proofs, more simulator episodes, more real-world robot trajectories, or more preference comparisons. A Lockean analysis forces the source question: what kind of experience entered the model?

Question 3: What licenses the move from past success to future success? This is the Humean question. A model's past held-out performance does not, by itself, guarantee future performance under shift. The license must come from assumptions about distributional stability, invariance, causal structure, environment match, or architecture.

Question 4: What structure is doing the work? This is the Kantian question. No model is "data alone." Tokenization, architecture, objective, optimizer, context window, memory, retrieval, tool interface, reward model, and sampling procedure all impose structure. The data fills a form; it does not define the form from nothing.

Refined claim: more data, of the right kind, processed through a learner with suitable structure, can improve generalization within the regime where the data and structure connect to the deployment problem.

That refined claim is less exciting than the original. It is also much more useful.

A Sidebar on Self-Evaluation

An LLM can explain Hume's problem of induction. It can write a competent paragraph about Kant's categories. It cannot, by introspection, tell you whether its own answer on a new task is licensed by robust understanding, memorized resemblance, benchmark leakage, tool luck, or a brittle pattern learned during training.

This is not a mystical limitation. It is a measurement limitation. The model does not have privileged access to the causal story of its own reliability. Calibration, uncertainty estimates, adversarial tests, OOD evaluations, interpretability tools, and deployment monitoring are external disciplines. They are not replaced by a model saying "I am confident."

The practical rule is blunt: self-evaluation is useful as one signal, but it does not solve Hume's problem. A model cannot certify its own future reliability under distribution shift merely by producing a plausible self-description.
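Calibration is one of the external disciplines named above, and it is simple to measure once labels are available. The sketch below computes expected calibration error with equal-width confidence bins; the bin count and the example numbers (a hypothetical model that always reports 0.9 confidence, with 90% accuracy on one dataset and 60% on a shifted one) are assumptions for illustration only.

```python
import numpy as np

def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average gap between stated confidence and observed accuracy."""
    confidences = np.asarray(confidences, dtype=float)
    correct = np.asarray(correct, dtype=float)
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    # Interior edges only, so bin ids run from 0 to n_bins - 1
    # and a confidence of exactly 1.0 lands in the last bin.
    bin_ids = np.digitize(confidences, edges[1:-1])
    ece = 0.0
    for b in range(n_bins):
        mask = bin_ids == b
        if mask.any():
            gap = abs(correct[mask].mean() - confidences[mask].mean())
            ece += mask.mean() * gap   # weight by the fraction of predictions in this bin
    return float(ece)

# Hypothetical model that always says "90% confident".
confidences = np.full(1000, 0.9)
correct_iid = np.array([1] * 900 + [0] * 100)      # 90% right on familiar data
correct_shifted = np.array([1] * 600 + [0] * 400)  # 60% right after a shift

ece_iid = expected_calibration_error(confidences, correct_iid)
ece_shifted = expected_calibration_error(confidences, correct_shifted)
print(round(ece_iid, 3), round(ece_shifted, 3))
```

The stated confidence is identical in both cases. Only the externally collected labels reveal that it was well calibrated on one dataset and badly overconfident on the other, which is the sense in which self-description cannot stand in for measurement.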

What Each Lens Picks Out

The Lockean lens asks: what entered the model?

An LLM trained mostly on web text inherits a distribution of written human output. That distribution is not the world. It is the world as filtered through what people wrote, translated, uploaded, licensed, scraped, selected, moderated, deduplicated, and weighted. This makes data curation philosophically central rather than merely operational. If the model lacks a kind of content, the first question is whether that content ever entered the training signal in a usable form.

The Humean lens asks: why should success continue?

A model that succeeds on yesterday's benchmark may fail on tomorrow's deployment. The Humean lesson is not skepticism for its own sake. It is assumption hygiene. Say what must remain stable. Say what can shift. Say which shift was tested. Say which shift was not tested. Do not smuggle deployment reliability into the phrase "generalization."

The Kantian lens asks: what form makes learning possible?

Every architecture is a wager. A transformer is a wager about sequence modelling and attention. A convolutional network is a wager about locality and translation. A graph neural network is a wager about relational structure. A world-model agent is a wager that predicting latent environment dynamics matters for planning. These wagers may be right or wrong for a task, but they are never absent.
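The convolutional wager can be checked directly. The sketch below is a minimal illustration with hypothetical sizes and random weights: it applies a shared three-tap kernel with wrap-around (a circular 1-D convolution in the deep-learning sense, i.e. cross-correlation) and an unconstrained dense layer to the same signal, then asks whether shifting the input merely shifts the output.

```python
import numpy as np

def circular_conv1d(signal, kernel):
    """Apply one shared kernel at every position, wrapping around at the ends."""
    n = len(signal)
    return np.array([
        sum(kernel[j] * signal[(i + j) % n] for j in range(len(kernel)))
        for i in range(n)
    ])

rng = np.random.default_rng(0)
signal = rng.normal(size=16)
kernel = rng.normal(size=3)
dense = rng.normal(size=(16, 16))   # unconstrained linear layer, no weight sharing

shift = 5
shifted = np.roll(signal, shift)

# Does "shift then transform" equal "transform then shift"?
conv_commutes = np.allclose(
    circular_conv1d(shifted, kernel),
    np.roll(circular_conv1d(signal, kernel), shift),
)
dense_commutes = np.allclose(dense @ shifted, np.roll(dense @ signal, shift))
print(conv_commutes)   # True
print(dense_commutes)  # False
```

The convolution respects translation before it has seen a single training example; the dense layer would have to learn that regularity from data, if it learns it at all. That is the sense in which the structure is brought to the data rather than extracted from it.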

| Lens | Best use in the essay | Modern artifact |
|---|---|---|
| Lockean | Audit the source of model content | Data cards, corpus documentation, curation policy, synthetic-data provenance |
| Humean | Audit the license for extrapolation | OOD tests, robustness suites, calibration, deployment monitoring |
| Kantian | Audit the structure of learning | Architecture choice, inductive bias, representation, world model, memory system |

The strongest version of the essay is not "Locke equals scaling, Hume equals experience, Kant equals architecture." That mapping is too cute. The stronger version is: modern AI arguments often fail because they answer one of these questions while pretending to answer all three.

Where the Analogy Breaks

This section is not optional. Without it, the essay becomes a cringe analogy machine.

First, Locke, Hume, and Kant were writing about human cognition. They were not writing about artificial systems trained by gradient descent. The Humean problem of induction is not identical to OOD failure in machine learning. It has the same shape: past cases do not automatically license future cases. Shape is not identity.

Second, Kantian categories are not transformer attention heads. Kant's claim is transcendental: certain structures are necessary for experience as such. Modern architecture claims are engineering claims: certain structures improve performance, sample efficiency, robustness, or planning under specific constraints. The family resemblance is useful. Treating it as equivalence is sloppy.

Third, "experience" changes meaning across contexts. Locke's experience is sensation and reflection. Silver and Sutton's experience is agent-environment interaction. RLHF preference data is another thing. Tool execution traces are another. Real-world embodied feedback is another. The essay should never slide between these senses without naming the slide.

Fourth, the bitter lesson is not naive empiricism. Sutton's argument is not "data without structure." It is that general methods that scale with computation have repeatedly beaten methods that encode brittle human assumptions. A Kantian reading can agree with that and still insist that the supposedly "general" method has structure.

The lens illuminates. It does not prove.

Why This Matters

Builders should care because this is a resource-allocation problem. When a system plateaus, you can spend the next dollar on more data, better data, richer feedback, better tools, better evaluations, architecture changes, or product constraints. Philosophy will not choose the knob for you. It will stop you from pretending all knobs are the same knob.

Researchers should care because the scaling debate is often misframed. The question is not whether scaling "works." It does. The question is what kind of performance scaling evidence licenses, and where the license stops. That is a Humean question wearing a benchmark chart.

Educators should care because the same split appears in teaching: should learners absorb more examples, encounter richer problems, or acquire better conceptual structure? The answer is usually "all three," but the binding constraint changes by learner, topic, and level.

FAQ

Is the bitter lesson Lockean?

It is Lockean in spirit, not in detail. The bitter lesson distrusts human hand-engineering and trusts methods that can exploit data and computation. Locke distrusts innate content and emphasizes experience as the source of ideas. The overlap is real, but not exact. Locke still assumes faculties of comparison, abstraction, and combination. No serious ML system is structureless either.

Is the era of experience Humean?

Partly. It is empiricist because it grounds learning in experience. But Hume's signature contribution is the problem of induction, not just empiricism. The era-of-experience thesis says richer interaction data may unlock stronger learning. The Humean question then asks whether those interactions license generalization to the next environment, the next task, or the next tool regime.

Is JEPA Kantian?

Only in a controlled sense. JEPA and world-model work are Kantian-flavoured because they emphasize representation and structure as prerequisites for useful prediction and planning. They are not Kantian in the full philosophical sense. Kant argues about necessary forms of experience. JEPA is an engineering and research program.

Are Bayesian priors Kantian?

They are related but not identical. Bayesian priors and Kantian categories are both contributions the agent brings to data. But Bayesian priors are usually defeasible and can be overwhelmed by enough evidence. Kantian categories are supposed to be conditions for experience itself. The comparison is useful, but only if that difference stays visible.

Series Note

This is the first essay in the Philosophy × AI sequence. The next essay, From Plato's Cave to the Era of Experience, uses Plato to separate representation, imitation, interaction, correction, and knowledge. The discipline is the same: philosophers are lenses, not mascots.

References

Primary philosophical texts:

- Locke, John. An Essay Concerning Human Understanding. London, 1689. Standard scholarly edition: Nidditch, ed., Oxford, 1975. Key passage: II.1.2.

- Hume, David. A Treatise of Human Nature. London, 1739. Standard scholarly edition: Norton and Norton, eds., Oxford, 2000. Key passage: 1.3.6.

- Hume, David. An Enquiry Concerning Human Understanding. London, 1748. Standard scholarly edition: Beauchamp, ed., Oxford, 1999. Key section: IV.

- Kant, Immanuel. Critique of Pure Reason. Riga, 1781; second edition, 1787. Standard English: Guyer and Wood, eds., Cambridge, 1998. Key passage: A51 / B75.

Modern AI sources:

- Sutton, Richard. "The Bitter Lesson." March 13, 2019.

- Kaplan, Jared, et al. "Scaling Laws for Neural Language Models." arXiv:2001.08361, 2020.

- Hoffmann, Jordan, et al. "Training Compute-Optimal Large Language Models." arXiv:2203.15556, 2022.

- Silver, David, and Richard S. Sutton. "Welcome to the Era of Experience." Preprint chapter for Designing an Intelligence, MIT Press, 2025.

- LeCun, Yann. "A Path Towards Autonomous Machine Intelligence." OpenReview position paper, 2022.

- Vaswani, Ashish, et al. "Attention Is All You Need." arXiv:1706.03762, 2017.

- Recht, Benjamin, Rebecca Roelofs, Ludwig Schmidt, and Vaishaal Shankar. "Do ImageNet Classifiers Generalize to ImageNet?" Proceedings of Machine Learning Research, 97:5389-5400, 2019.

- Liu, Jiashuo, et al. "Towards Out-Of-Distribution Generalization: A Survey." arXiv:2108.13624, 2021.

Standard scholarly references:

- "John Locke." Stanford Encyclopedia of Philosophy.

- "David Hume." Stanford Encyclopedia of Philosophy.

- "Immanuel Kant." Stanford Encyclopedia of Philosophy.

- "The Problem of Induction." Stanford Encyclopedia of Philosophy.