A Concrete System, Four Different Audits

Imagine a national grocery chain rolls out an AI hiring system to screen applicants for warehouse supervisor positions. Same model, same training data, same audit dashboard across every distribution center.

Run the four lenses in sequence and they audit different things:

- Rawls. Does the system improve the position, opportunity, and self-respect of the least advantaged applicants? A demographic-parity check on the model is part of an answer. It is not the whole answer. Rawls wants to know whether the surrounding institutions (entry-level wage floor, internal promotion ladder, union representation, recourse against rejection) preserve fair equality of opportunity for people whose labour-market position is already weak.

- Foucault. What new records does the system create, what behaviours does it reward, what gets normalized? An AI screen converts a one-page resume and an interview into a continuous score, a probability, a confidence interval, an explanation, an audit log. That score outlives the hiring decision. It feeds future allocation, monitoring, and termination systems. The Foucauldian question is not "is the score accurate?" It is: what is the score being used to do, and to whom?

- Marx. Who benefits if hiring becomes cheaper, faster, and more standardized? In one direction, the firm captures the productivity gain through lower per-hire cost and faster fill rates. In another direction, the workforce becomes more substitutable: standardized scoring narrows the band of applicant features that count, weakens individual bargaining position, and shifts more of the hiring surplus to capital and to vendor licensing. The fairness dashboard does not settle this allocation. The wage agreement does.

- Beauvoir. Who can realistically contest a rejection, reskill, relocate, or absorb the cost of a wrong call? A college student rejected from a warehouse-supervisor role faces a different downside than a single parent in the same town with a mortgage and dependents. Equal exposure to the system is not equal risk under it. Beauvoir's contribution is that formal equal access cannot stand in for situated freedom.

Four lenses, four audits, four different recommendations, one system. The fairness dashboard answers part of the Rawlsian question and almost none of the others.

That is the spine of this essay.

The AI Economy Is Not One Problem

The laziest version of the AI economy debate asks one question: will AI take our jobs?

That question matters, but it is too blunt. AI does not enter the economy as a single machine doing one thing. It enters as a bundle of systems that classify applicants, allocate tasks, write code, monitor workers, draft emails, price risk, filter customers, summarize meetings, generate media, assist doctors, score students, and reorganize firms around compute, data, and software. The economic question is not just how many jobs are lost. It is also who gains leverage, who becomes measurable, who loses discretion, who owns the productive system, who gets the surplus, and who is forced to adapt from an already weaker position.

The scale is no longer hypothetical. The IMF estimated in 2024 that almost 40 percent of global employment is exposed to AI, with about 60 percent exposure in advanced economies.[1] The ILO's 2025 refined global exposure index found that clerical occupations remain among the highest-exposure categories, while some digitized professional and technical jobs have become more exposed as generative AI capabilities have expanded.[2] The Stanford AI Index reported that global corporate AI investment more than doubled in 2025, with private investment growing fastest and generative AI capturing a large share of that surge.[3] Meanwhile, the EU AI Act treats many AI systems used in recruitment, worker management, task allocation, promotion, termination, and performance monitoring as high-risk systems.[4]

That is the live background. But this essay is not a policy brief. It is the first half of a two-essay arc inside the Philosophy × AI series. The previous essays used Locke, Hume, and Kant to separate data source, inductive license, and architectural form, and used Plato's Cave to separate representation, imitation, interaction, correction, and knowledge. This essay uses Rawls, Foucault, Marx, and Beauvoir to separate fairness, power, labour, and situated freedom.

The thesis is simple:

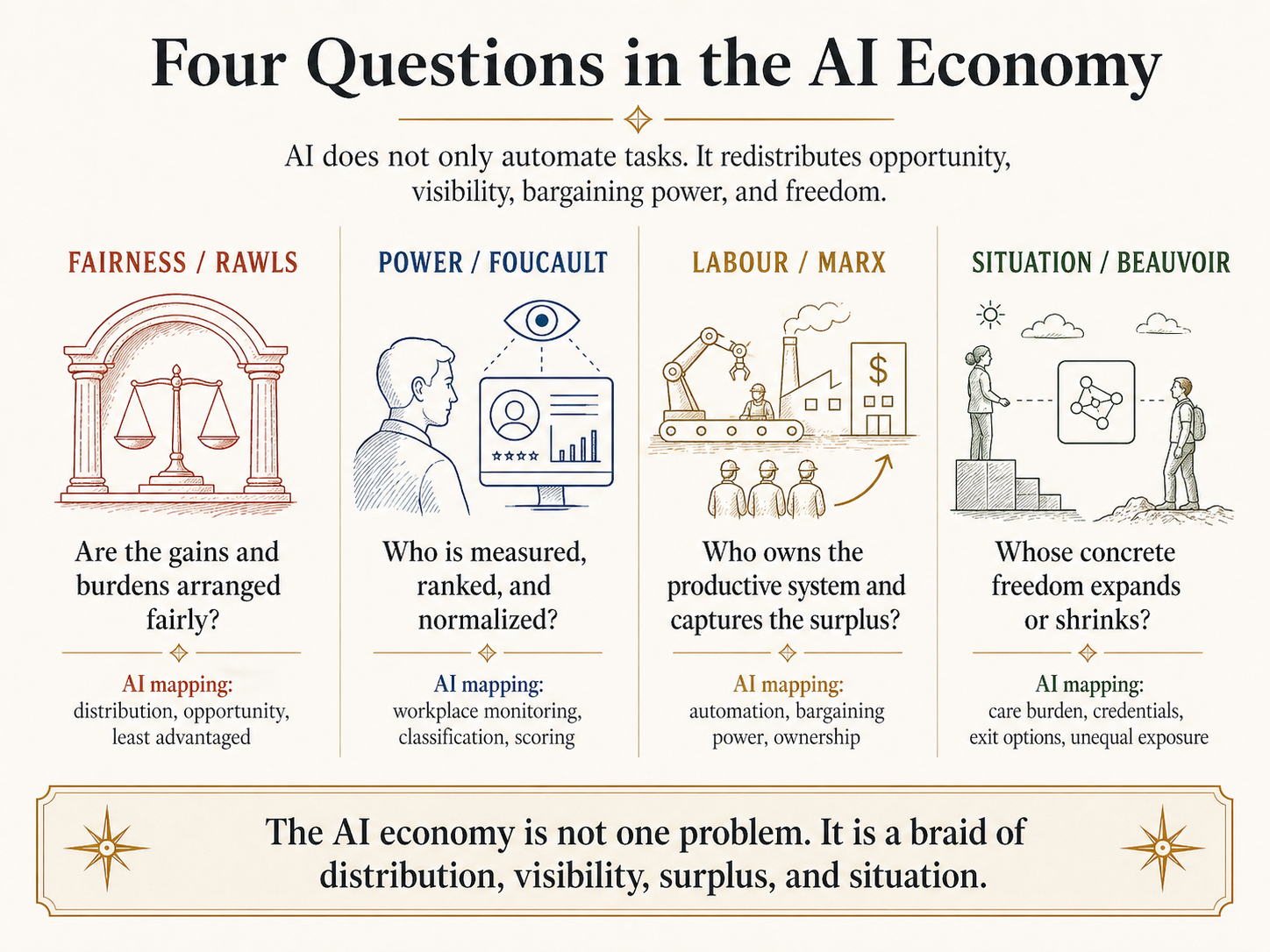

The AI economy is not one ethical problem. It is four problems braided together: distribution, visibility, surplus, and situation.

Rawls asks whether the gains and burdens of AI are arranged fairly in the basic structure of society. Foucault asks how AI systems make people visible, comparable, normalizable, and governable. Marx asks who owns the productive machinery and who captures the surplus produced with it. Beauvoir asks whose freedom is expanded or narrowed in concrete social situations, especially when people enter the transition from unequal starting positions.

None of these thinkers predicted AI. Treating them as if they did would be false. The point is sharper: they give us four instruments for refusing vague AI ethics. A fairness metric is not a theory of justice. A productivity gain is not a labour settlement. A dashboard is not accountability. An "augmented" worker is not necessarily a freer worker.

This essay sets up the four lenses and shows where local fairness metrics stop being able to answer the harder questions. The second essay, AI Productivity and the Distribution Question, runs an explicit elenchus on the productivity-makes-society-better claim, applies each lens in detail, and turns to what builders and policymakers can actually do.

Four Lenses, Not Four Slogans

Rawls is the fairness lens, but not in the narrow machine learning sense. In A Theory of Justice and later work, Rawls asks how the basic structure of society should distribute rights, opportunities, powers, income, wealth, and the social bases of self-respect. His famous difference principle says, roughly, that social and economic inequalities should be arranged to the greatest benefit of the least advantaged, while preserving equal basic liberties and fair equality of opportunity.[5] That is not the same as saying a hiring classifier should satisfy demographic parity or equalized odds. Those metrics may matter. They are not the whole question. Rawls forces the harder question: does the AI-driven economy make the least advantaged more secure, more respected, and more capable of participating as equals?

Foucault is the power lens. In Discipline and Punish and the lectures around governmentality, he studies institutions that observe, document, rank, normalize, and correct people. The exam, the record, the case file, the timetable, the inspection, the norm: these are not neutral containers for information. They are techniques of power. The Stanford Encyclopedia summary of Foucault is useful here: in knowing people, institutions control them; in controlling people, institutions produce knowledge about them.[6] Workplace AI fits this almost too cleanly. A model that predicts attrition, flags low productivity, scores job candidates, ranks driver performance, or detects "engagement" does not merely help management see. It helps define what counts as a worker worth seeing.

Marx is the labour and ownership lens. He is not useful because he gives a one-line prophecy that machines replace workers. He is useful because he asks who owns the productive apparatus, who sells labour power, who controls the labour process, and who captures surplus. In Marx's account of capitalism, profit is tied to the appropriation of surplus labour, and machinery changes the balance of power between capital and workers.[7] AI is not magic labourless production. It is built on engineers, researchers, content, annotators, data-center construction, energy systems, platform users, domain experts, human feedback, and the accumulated work embedded in public and private data. When deployed, it can assist workers, replace tasks, deskill roles, intensify monitoring, or shift bargaining power. The Marxian question is not "is AI good or bad?" It is: who controls the system, and who receives the gains?

Beauvoir is the situation lens. In The Second Sex and The Ethics of Ambiguity, she insists that freedom is lived from within concrete social conditions. A person is not an abstract unit dropped into an economy with equal power to adapt. People are gendered, classed, raced, credentialed, encumbered by care responsibilities, dependent on wages, and shaped by norms that make some choices easier than others. Beauvoir's feminist argument is not simply that women should be included in the same competition. It is that the structure of the situation can make a nominally free choice unfree in practice.[8] This matters for AI because labour exposure is not evenly distributed. The ILO's earlier global analysis already emphasized that generative AI exposure is strongly shaped by occupational structure and can be highly gendered, especially where clerical work is a major source of female employment.[9]

Put bluntly: Rawls asks whether AI's gains are fair. Foucault asks what AI makes visible and controllable. Marx asks who owns and profits. Beauvoir asks whose concrete freedom is expanded or shrunk.

| Lens | Core question | AI-economy version | Common failure if ignored |
|---|---|---|---|
| Rawls | Are inequalities arranged fairly? | Do AI gains improve the position, opportunity, and self-respect of the least advantaged? | Calling a system "fair" because a metric improved |
| Foucault | How does knowledge become power? | Who is measured, ranked, watched, normalized, and made into a case? | Treating surveillance as mere productivity tooling |
| Marx | Who owns production and captures surplus? | Do AI gains flow to labour, users, capital owners, or platform gatekeepers? | Treating productivity as if distribution were automatic |
| Beauvoir | What happens to situated freedom? | Whose agency is expanded, and whose choices narrow under automation or augmentation? | Treating all workers as equally able to adapt |

This is the right level of analysis. Anything softer collapses into "AI ethics" wallpaper.

Where the Lenses Argue

Rawls, Foucault, Marx, and Beauvoir do not form a consulting-framework quadrant. They were not assembled to agree. Reading them as four slots on a dashboard hides what makes the apparatus useful, which is that the lenses pull against each other and force the analyst to choose. Three live disagreements, in increasing sharpness:

Rawls vs Marx: is the basic structure fixable, or is ownership the problem? Rawls's project assumes that the basic structure of a society can in principle be reformed to satisfy fair equality of opportunity and the difference principle without abolishing private ownership of capital. Marx's reading is that the same productive system the Rawlsian wants to make fair is the system that produces the inequality the Rawlsian wants to fix; reform-from-the-inside hits a ceiling because the firm's owners control the surplus and the rules. Applied to AI: a Rawlsian welcomes wage insurance, retraining, public AI infrastructure, antitrust, and bias audits as legitimate reforms of the basic structure. A Marxian reads the same package as managerial Keynesianism that stabilizes capital's grip on the means of AI production. Both can be right at the same time about different parts of the question. Treating them as agreeing on "fairness" is the mistake.

Foucault vs Rawls: is institutional rationalism the cure or the diagnosis? Foucault's whole project is a long argument against the assumption that more rational institutions, more transparent measurement, and more public-justification procedures are unambiguously good. The exam, the case file, the audit, the model card, and the institutional review board are technologies of power, not neutral tools. The Rawlsian habit of reaching for "well-designed institutions" reads, from a Foucauldian angle, as a faith that more procedure equals more legitimacy. A Foucauldian-friendly AI policy will sometimes prefer less measurement, less institutional documentation, less contestable-decision infrastructure, on the grounds that those technologies extend governmental visibility into life. A Rawlsian will see exactly the same minimalism as failure to provide due process. That is a real disagreement; the essay is not pretending it is a balance.

Marx vs Beauvoir: is the unit of analysis class, or situation? Both reject the abstract worker, but for different reasons. Marx's argument tends to treat class position as the dominant explanatory variable: the proletarian, the petit bourgeois, the rentier. Beauvoir insists that gendered, racialized, embodied, and care-laden situations cut across class and produce material constraints that a class analysis on its own under-describes. Applied to AI: a strict Marxian reading of warehouse-monitoring software emphasizes the worker-versus-capital frame; a Beauvoir-inflected reading insists that the same monitoring lands differently on a single mother of three than on a college-student worker, even when both are technically in the same class position with the same surplus claim. The two readings produce different policy priorities.

A small grid that summarizes the three live tensions:

| Tension | What Rawls / Marx / Foucault / Beauvoir actually disagree about | Where AI-policy debates land on the disagreement |
|---|---|---|
| Reform vs ownership | Whether the basic structure can be made just without changing who owns the productive apparatus | Wage-insurance / retraining / public-procurement (Rawls-leaning) vs cooperative ownership / antitrust / public-AI infrastructure (Marx-leaning) |
| Measurement as legitimacy | Whether more institutional documentation is a fix or part of the problem | "Audit everything, contestability everywhere" (Rawls-leaning) vs "shrink the surface of measured life" (Foucault-leaning) |
| Class vs situation | Whether class position is the binding variable or one among several | Sectoral labour policy (Marx-leaning) vs care-economy / disability / migration-status policy (Beauvoir-leaning) |

The four-lens framework is useful because each lens forces a different audit, not because they reach a consensus. A working analysis of any concrete AI system should expect the lenses to arrive at different recommendations and should be honest about which lens is being privileged in the final call. The second essay in this pair (AI Productivity and the Distribution Question) runs all four through one concrete domain, call-center AI, and its verdict is itself partial: the four lenses do not converge on a single answer.

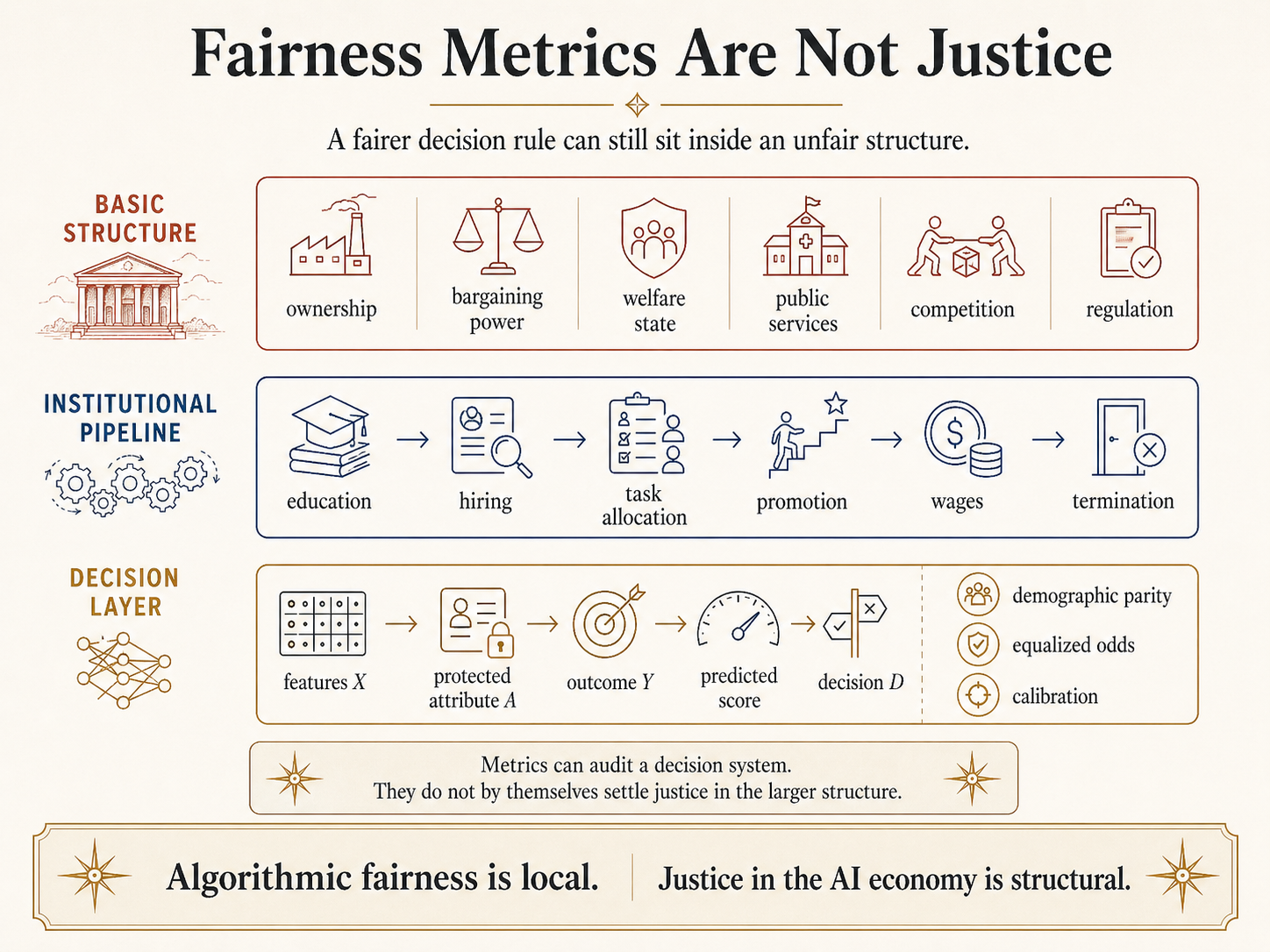

A Statistical Translation: Fairness Metrics Are Not Justice

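Modern machine learning often translates fairness into constraints on a decision rule. Let $A$ be a protected attribute, $Y$ a target or outcome, $X$ observed features, $S$ a model score, and $\hat{Y}$ a decision. A hiring system, for example, might try to satisfy a constraint such as

$$P(\hat{Y} = 1 \mid A = a) = P(\hat{Y} = 1 \mid A = b)$$

or an error-rate condition such as

$$P(\hat{Y} = 1 \mid Y = y, A = a) = P(\hat{Y} = 1 \mid Y = y, A = b), \quad y \in \{0, 1\}$$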

Those constraints are not meaningless. They can catch real harms. They can make discrimination harder to hide. They are part of a serious audit.
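To make the two kinds of constraint concrete, here is a minimal Python sketch that audits a set of hiring decisions by group. Everything in it is an assumption for illustration: the helper names (`group_rates`, `gap`), the group labels, and the sixteen toy applicants are hypothetical, not data from any real system. The toy data is chosen so that selection rates match across groups while error rates do not.

```python
from collections import defaultdict


def _mean(values):
    # Raises ZeroDivisionError on an empty list; the toy data below has
    # both outcomes present in every group, so that does not happen here.
    return sum(values) / len(values)


def group_rates(groups, outcomes, decisions):
    """Per-group selection rate, true positive rate, and false positive rate."""
    buckets = defaultdict(lambda: {"all": [], "pos": [], "neg": []})
    for a, y, d in zip(groups, outcomes, decisions):
        buckets[a]["all"].append(d)
        buckets[a]["pos" if y == 1 else "neg"].append(d)
    return {
        a: {
            "selection_rate": _mean(b["all"]),  # share of the group selected
            "tpr": _mean(b["pos"]),             # selected among the qualified
            "fpr": _mean(b["neg"]),             # selected among the unqualified
        }
        for a, b in buckets.items()
    }


def gap(rates, key):
    """Largest between-group difference for one rate (0.0 means parity)."""
    values = [r[key] for r in rates.values()]
    return max(values) - min(values)


# Hypothetical toy data: two groups of eight applicants, four qualified each.
groups = ["a"] * 8 + ["b"] * 8
outcomes = [1, 1, 1, 1, 0, 0, 0, 0] * 2
decisions = [1, 1, 1, 0, 1, 0, 0, 0,   # group a
             1, 1, 0, 0, 1, 1, 0, 0]   # group b

rates = group_rates(groups, outcomes, decisions)
gaps = {key: gap(rates, key) for key in ("selection_rate", "tpr", "fpr")}
print(gaps)
```

On this toy data the selection-rate gap is zero while the true-positive and false-positive gaps are both 0.25, so the same decisions pass one constraint and fail the other. Which constraint to audit is itself a choice the metric cannot make for you.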

But they do not answer Rawls's question. Rawls is not asking only whether one decision rule treats groups symmetrically. He is asking whether the institutions that distribute opportunities, income, status, education, bargaining power, and self-respect can be justified to free and equal citizens. You can satisfy a local fairness metric while preserving an unjust labour market. You can remove a protected attribute while keeping proxies that reproduce structural inequality. You can equalize false positive rates while the entire job pipeline becomes more precarious.
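The proxy point can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a claim about any deployed system: the applicants, the `postcode_area` feature, and the `shortlist` rule are all hypothetical. The rule never sees the group label, yet the audit afterwards shows unequal selection rates because the feature it does see is correlated with group.

```python
# Hypothetical applicants: (group, postcode_area). The group label is never
# passed to the screening rule; it is kept only for the audit afterwards.
applicants = [
    ("a", "north"), ("a", "north"), ("a", "north"), ("a", "south"),
    ("b", "north"), ("b", "south"), ("b", "south"), ("b", "south"),
]


def shortlist(postcode_area):
    """A 'blind' rule: it sees only the postcode area, not the group."""
    return postcode_area == "north"


def selection_rate_by_group(rows):
    """Audit step: share of each group that the blind rule shortlists."""
    totals, selected = {}, {}
    for group, area in rows:
        totals[group] = totals.get(group, 0) + 1
        selected[group] = selected.get(group, 0) + int(shortlist(area))
    return {g: selected[g] / totals[g] for g in totals}


blind_rates = selection_rate_by_group(applicants)
print(blind_rates)
```

Here group a is shortlisted at a rate of 0.75 and group b at 0.25, even though the rule is formally blind to group. Dropping the protected attribute changes what the rule is told, not what the labour market has already encoded in the other features.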

They also do not answer Foucault's question. Foucault asks why this decision is being automated, why these features are recorded, why this behaviour is treated as a signal, who can inspect the record, who can contest the category, and how people change their conduct when they know they are continuously scored.

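They do not answer Marx's question either. Suppose AI raises output by $\Delta Q$. The economic fight is over the allocation function:

$$\Delta Q \;\mapsto\; \left(\Delta Q_{\text{labour}},\ \Delta Q_{\text{users}},\ \Delta Q_{\text{capital}},\ \Delta Q_{\text{platform}}\right), \qquad \Delta Q_{\text{labour}} + \Delta Q_{\text{users}} + \Delta Q_{\text{capital}} + \Delta Q_{\text{platform}} = \Delta Q$$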

A fairness dashboard for the model does not determine that allocation. Neither does aggregate productivity. It is decided by ownership, contracts, market power, labour institutions, taxes, procurement, regulation, and bargaining.
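A small Python sketch makes the separation explicit. It assumes, purely for illustration, a gain of 200 in arbitrary units split among the four recipients named earlier in the essay (labour, users, capital owners, platform gatekeepers); the two regimes and their percentage shares are hypothetical, not estimates.

```python
def allocate(gain, shares_pct):
    """Split a gain among recipients according to integer percentage shares."""
    if sum(shares_pct.values()) != 100:
        raise ValueError("shares must sum to 100 percent")
    return {who: gain * pct / 100 for who, pct in shares_pct.items()}


gain = 200  # hypothetical productivity gain, arbitrary currency units

# Two hypothetical institutional settings. Neither depends on the model's
# fairness metrics or on the size of the gain.
regimes = {
    "weak_bargaining": {"labour": 10, "users": 15, "capital": 45, "platform": 30},
    "strong_bargaining": {"labour": 35, "users": 20, "capital": 30, "platform": 15},
}

allocations = {name: allocate(gain, shares) for name, shares in regimes.items()}
for name, split in allocations.items():
    print(name, split)
```

The model, the dashboard, and the gain are identical in both runs. Only the share vector differs, and that vector is set outside the model.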

Finally, fairness metrics do not answer Beauvoir's question. Two workers can face the same AI tool but not the same situation. One has savings, credentials, mobility, and no care burden. Another has unstable hours, dependents, local job constraints, and a role whose tasks are easiest to automate. Equal exposure is not equal risk. Equal access is not equal freedom.
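One rough way to see the gap between exposure and risk is a toy calculation in Python. The `Worker` fields, the two workers, and the 0.25 displacement probability are hypothetical, and "months without income" is only one crude proxy for what Beauvoir means by situation.

```python
from dataclasses import dataclass


@dataclass
class Worker:
    name: str
    months_of_savings: float
    months_to_reemploy: float


def income_gap_months(worker):
    """Months without income or savings if displaced (never negative)."""
    return max(0.0, worker.months_to_reemploy - worker.months_of_savings)


def expected_gap(worker, p_displaced):
    """Expected uncovered months under a given displacement probability."""
    return p_displaced * income_gap_months(worker)


p_displaced = 0.25  # hypothetical: identical exposure for both workers

buffered = Worker("buffered", months_of_savings=6, months_to_reemploy=3)
constrained = Worker("constrained", months_of_savings=1, months_to_reemploy=5)

for w in (buffered, constrained):
    print(w.name, expected_gap(w, p_displaced))
```

Both workers face the same probability of displacement, but the expected uncovered period is 0.0 months for the first and 1.0 month for the second. The exposure figure is shared; the risk is not.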

This is the technical heart of the essay:

Algorithmic fairness is a local property of a decision system. Justice in the AI economy is a structural property of the institutions around that system.

If you are building AI systems, do not confuse the two. A fairness metric is a necessary instrument in some contexts. It is not a moral operating system.

What Comes Next

This essay set up the four lenses and showed where local fairness metrics stop being able to answer the questions Rawls, Foucault, Marx, and Beauvoir actually pose. The second essay, AI Productivity and the Distribution Question, runs an explicit elenchus on the strong claim that AI productivity will automatically make society better off, applies each lens in depth (Rawls against dashboard ethics; Foucault on workplace visibility; Marx on the labour stack and surplus; Beauvoir on the unequal burden of adaptation), and turns to what builders and policymakers can actually do.

Read this essay first if you want the framework. Read the second if you want the verdict and the practical recommendations.

Sources

Primary philosophical texts:

- Rawls, John. A Theory of Justice. Harvard University Press, 1971; revised edition 1999.

- Rawls, John. Justice as Fairness: A Restatement. Harvard University Press, 2001.

- Foucault, Michel. Discipline and Punish: The Birth of the Prison. 1975; English translation 1977.

- Foucault, Michel. The Birth of Biopolitics: Lectures at the Collège de France, 1978-1979. English translation 2008.

- Marx, Karl. Capital, Volume I. 1867.

- Beauvoir, Simone de. The Second Sex. 1949.

- Beauvoir, Simone de. The Ethics of Ambiguity. 1947.

Standard scholarly references:

- "John Rawls." Stanford Encyclopedia of Philosophy. https://plato.stanford.edu/entries/rawls/

- "Michel Foucault." Stanford Encyclopedia of Philosophy. https://plato.stanford.edu/entries/foucault/

- "Karl Marx." Stanford Encyclopedia of Philosophy. https://plato.stanford.edu/entries/marx/

- "Simone de Beauvoir." Stanford Encyclopedia of Philosophy. https://plato.stanford.edu/entries/beauvoir/

Modern AI / labour / governance sources:

- IMF. "Gen-AI: Artificial Intelligence and the Future of Work." Staff Discussion Note, 2024.

- ILO. "Generative AI and Jobs: A Refined Global Index of Occupational Exposure." 2025.

- Stanford HAI. "2026 AI Index Report: Economy." 2026.

- European Union. "Artificial Intelligence Act, Regulation (EU) 2024/1689," Annex III.

- Barocas, S., Hardt, M., and Narayanan, A. Fairness and Machine Learning: Limitations and Opportunities. 2023. https://fairmlbook.org/

Internal Links

- PhilosophyPath:
  - empiricism-induction-and-llm-limits for the empiricism essay (Locke, Hume, Kant).
  - platos-cave-and-the-era-of-experience for the Plato x AI essay.
  - ai-productivity-and-the-distribution-question for the second half of this argument.

- TheoremPath:
  - algorithmic-fairness (forthcoming).
  - calibration-and-uncertainty; proper-scoring-rules.

Footnotes

1. IMF, "Gen-AI: Artificial Intelligence and the Future of Work," 2024. The report estimates almost 40 percent global employment exposure to AI and about 60 percent in advanced economies.

2. ILO, "Generative AI and Jobs: A Refined Global Index of Occupational Exposure," 20 May 2025. The report updates the 2023 global exposure index and finds clerical occupations remain among the highest-exposure categories.

3. Stanford HAI, "2026 AI Index Report: Economy." The economy chapter reports that global corporate AI investment more than doubled in 2025, with private investment growing fastest.

4. EU AI Act, Regulation (EU) 2024/1689, Annex III. The high-risk categories include AI used for recruitment, selection, promotion, termination, task allocation, and monitoring/evaluation of workers.

5. See the Stanford Encyclopedia of Philosophy entries on Rawls and the original position, especially the supplement on the difference principle. https://plato.stanford.edu/entries/rawls/

6. See the Stanford Encyclopedia of Philosophy entry on Foucault, especially the discussion of examination, normalization, documentation, and power/knowledge. https://plato.stanford.edu/entries/foucault/

7. See the Stanford Encyclopedia of Philosophy entry on Marx, especially the discussion of labour power, surplus labour, and exploitation. https://plato.stanford.edu/entries/marx/

8. See the Stanford Encyclopedia of Philosophy entry on Simone de Beauvoir, especially the discussion of situation, freedom, patriarchy, and women's oppression. https://plato.stanford.edu/entries/beauvoir/

9. ILO Working Paper 96, "Generative AI and Jobs: A Global Analysis of Potential Effects on Job Quantity and Quality," 2023.