The Surplus-Routing Thesis

The clean version of the AI-productivity story is that better tools raise output, output raises welfare, and the ethical task is to keep the tools accurate and broadly available. The thesis of this essay is that the productivity step is real, the welfare step is contingent, and the institutional step is where the action lives.

AI is not just a productivity tool. It is a surplus-routing mechanism.

For every productivity gain, the actual economic question is the routing function: who captures the gain through wages, margins, vendor licensing, lower prices, reduced headcount, cloud and API rents, executive compensation, dividends, taxes, or public services. AI does not write that routing function. Ownership, contracts, market power, labour institutions, regulation, and bargaining write it. The model is an input.

A second routing question runs alongside the first: who loses bargaining power because expert knowledge that used to live in workers has been encoded into a tool. A junior coder with a strong assistant ships more code; a senior coder whose decade of judgment has been distilled into the same assistant has lost a wage premium. A radiologist whose images get a high-quality second read may keep the case load and the salary; a translator whose rate collapses because clients now expect machine-assisted discounts has lost the price floor. The productivity number does not show this. The wage data shows it later.

This essay tests the optimistic claim, then applies the four lenses (Rawls, Foucault, Marx, Beauvoir) to the routing problem. It is the second half of an argument; the first half, Four Lenses on the AI Economy, set up the analytical apparatus. Algorithmic fairness is a property of a decision rule. Justice in the AI economy is a property of the institutions around the rule.

Two Cases, Three Routings

Concrete first. Take two AI deployments where the productivity claim is real and the surplus question is open.

Case A: a generative-AI customer-support assistant. Brynjolfsson, Li, and Raymond's study of a staggered rollout to 5,172 agents at one US software firm found a 14 percent average increase in issues resolved per hour, with nearly all of the gain concentrated among lower-skill agents.[1] The headline is real. The headline is also silent on where the surplus went.

Case B: AI coding assistants in a software company. Field measurements vary by task, language, and seniority, but several deployments have reported 10 to 40 percent throughput gains on specific, well-scoped subtasks (boilerplate, unit tests, refactors), with smaller or no gains on architectural design and on hidden-spec problems. The headline is contested. The surplus question is the same as in Case A: whatever the productivity number turns out to be, the routing options are still open.

For each case, three plausible institutional arrangements route the surplus differently:

| Routing | Customer-support case | Coding-assistant case |
|---|---|---|
| Capital-first | Firm reduces headcount at next attrition cycle, pockets margin, AI vendor takes a per-seat license fee. Workers see no wage change. | Firm hires fewer juniors; senior compensation flat; AI vendor and cloud provider extract rents proportional to inference. The encoded expertise of senior engineers is now a feature of the tool, not a wage premium. |
| Worker-first | Wages rise in proportion to productivity gain; assistant logs are explicitly excluded from performance management; promotion paths to senior tiers are kept funded. | Compensation tied to per-engineer output continues to rise; junior-to-senior apprenticeship is funded as an explicit firm investment because mentorship survives only when training cost is acknowledged. |
| Customer-first | Firm cuts subscription prices, capturing user welfare; agent headcount is held constant; vendor margin is squeezed. | Software prices fall, end users benefit, firm-side margin is held flat; engineer compensation rises slowly. |

The three routings are not consensus or compromise. They are mutually exclusive distributional outcomes from the same productivity number.

What decides which routing happens is not the model. It is contracts (what the seat license costs, what the labour agreement permits), market power (whether the firm is a monopsonist for the worker, whether the customer can switch), regulation (whether displaced workers get notice, whether AI logs can be used in termination decisions), and bargaining strength (whether workers can refuse, whether customers can pool demand, whether antitrust pressure caps vendor rents).
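The idea of a routing function can be made concrete with a small sketch. The code below holds the surplus fixed and varies only the shares, which stand in for the institutional arrangement. Every share is a made-up illustrative number, and the names `Routing`, `route`, and the claimant labels are assumptions introduced here, not figures or terms from any of the studies cited.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Routing:
    """One institutional arrangement, expressed as shares of the surplus."""
    name: str
    shares: dict  # claimant -> fraction of the surplus; must sum to 1

    def __post_init__(self):
        total = sum(self.shares.values())
        if abs(total - 1.0) > 1e-9:
            raise ValueError(f"{self.name}: shares sum to {total}, expected 1.0")


def route(surplus, routing):
    """Split a single surplus figure across claimants."""
    return {who: surplus * share for who, share in routing.shares.items()}


# Hypothetical shares for illustration only; no study reports these numbers.
ROUTINGS = [
    Routing("capital-first", {"wages": 0.0, "firm_margin": 0.7,
                              "vendor_fees": 0.3, "customer_prices": 0.0}),
    Routing("worker-first", {"wages": 0.6, "firm_margin": 0.2,
                             "vendor_fees": 0.2, "customer_prices": 0.0}),
    Routing("customer-first", {"wages": 0.1, "firm_margin": 0.0,
                               "vendor_fees": 0.1, "customer_prices": 0.8}),
]

SURPLUS = 100.0  # the same productivity gain in every scenario (arbitrary units)

for r in ROUTINGS:
    print(r.name, route(SURPLUS, r))
```

Nothing in `route` depends on how the surplus was produced. The model's quality sets `SURPLUS`; the contracts, market power, regulation, and bargaining described above set `shares`.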

The rest of this essay is the argument that those institutional variables are doing the real work, and that the philosophical lenses (Rawls, Foucault, Marx, Beauvoir) are useful precisely because each one names a different routing axis the model cannot address.

The Elenchus: Does AI Productivity Make Society Fairer?

The claim under test is the optimistic productivity claim:

If AI raises productivity, society becomes better off, and the main ethical task is to make the tools accurate, unbiased, and broadly available.

That claim is not stupid. A lot of progress does come from tools that make useful work cheaper. The problem is that the claim is underspecified.

Initial definition. "Productivity" means more output per unit of input, or a reduction in the cost of producing a given output. "Society becomes better off" means not merely that total output rises, but that people can reasonably regard the new arrangement as an improvement in their lives. "Fairer" means that the gains and burdens are arranged in a way that preserves equal citizenship, fair opportunity, and respect for those most exposed to the downside.

Question 1. Does AI raise productivity in some settings? Yes. The evidence is already real, though not universal. Brynjolfsson, Li, and Raymond studied the staggered rollout of a generative AI assistant to more than 5,000 customer support agents and found an average productivity increase, with larger gains for novice and lower-skilled workers than for experienced workers.[2] That is a serious result. It also shows heterogeneity immediately: AI did not affect all workers the same way.

Question 2. Does productivity imply fair distribution? No. This is Rawls's objection. A productivity increase can lower prices, raise wages, increase profits, reduce headcount, intensify work, or finance public goods. Which of those happens is not decided by the model. It is decided by institutions and bargaining power. A society can become richer while the least advantaged become less secure, less respected, or less able to participate as equals.

Question 3. Does making the model less biased solve the economic problem? No. Bias mitigation can improve a decision system, especially in hiring, credit, education, and essential services. But it does not settle who owns the model, who has recourse, who is displaced, who is monitored, or who captures the surplus. A less biased workplace surveillance system may still be a workplace surveillance system.

Question 4. Does augmentation solve the labour problem? Not automatically. Augmentation can make workers more capable. It can also make them more replaceable by encoding expert practice into a tool, lowering training costs for substitutes, narrowing discretion, and making work more measurable. The worker who is "augmented" may gain skill, or may become a human checkpoint in a process designed elsewhere.

Question 5. Is exposure the same as replacement? No. This is where sloppy labour-market commentary goes wrong. Exposure means tasks in an occupation overlap with AI capabilities. It does not mean the occupation disappears. The ILO's work stresses this distinction, and the IMF separates exposure from complementarity: some exposed jobs are more likely to be helped by AI, while others are more vulnerable to displacement or reduced labour demand.[3][4] But "not replacement" is not the same as "nothing to worry about." A role can survive while wages, status, autonomy, promotion paths, and bargaining power degrade.

Question 6. What hidden assumption does the optimistic claim make? It assumes that markets and firms will convert AI productivity into broadly shared human benefit. That is an institutional assumption, not a technical fact. Acemoglu's macroeconomic analysis is useful precisely because it pushes against hype: task-level improvements may produce nontrivial but modest aggregate productivity gains, and AI can widen the gap between capital and labour income even when some tasks become more productive.[5]

Refined claim. AI productivity can improve society when the institutions around the technology convert productivity into lower costs, better services, higher wages, reduced drudgery, stronger worker agency, and better opportunity for the least advantaged. Without those institutions, productivity can just as easily concentrate returns, expand surveillance, weaken labour, and harden unequal starting positions.

Takeaway. Do not ask only whether the model works. Ask who benefits when it works.

Fairness: Rawls Against Dashboard Ethics

Rawls is useful because he blocks the cheap move from "the model is fairer than the old process" to "the system is just."

Imagine a company replaces human résumé screening with an AI system. The new model is audited. It has lower measured group disparities than the previous human process. It explains which skills matter. It reduces time-to-hire. It looks, on the dashboard, like an ethical win.

Rawls would not stop there. He would ask where the job ladder comes from, who had access to the credentials the model rewards, what happens to applicants rejected by a brittle proxy, whether appeals exist, whether low-status workers gain real opportunity, and whether the institution as a whole can be justified to those with the least power inside it. The model may be an improvement. But the unit of justice is larger than the model.

This matters because AI ethics has a strong temptation toward metric capture. The system gets a fairness score, a bias score, a privacy checklist, a model card, a red-team report, and a risk rating. Those can be valuable. But once they become substitutes for institutional judgment, they shrink the moral question to what the organization already knows how to measure.

Rawls's basic-structure view resists that shrinkage. The AI economy is not just a pile of local decisions. It is an arrangement of education systems, labour markets, procurement rules, intellectual-property regimes, compute infrastructure, public services, corporate ownership, taxation, and safety nets. The difference principle does not say every AI firm must be equal. It says that inequalities need justification from the standpoint of the least advantaged. If frontier AI creates large profits, large rents, and large productivity advantages, the Rawlsian question is whether those gains improve the life chances and social standing of those least positioned to capture them.

A Rawlsian AI policy does not stop at bias audits. It asks about access to AI tools in public schools, retraining that is actually usable by workers with families, procurement that does not lock public agencies into opaque vendors, wage insurance, contestable automated decisions, antitrust, public digital infrastructure, and whether AI-enabled productivity gains show up in wages, service quality, and reduced burdens rather than only in margins.

For builders, the Rawlsian lesson is direct:

A locally fair model deployed into an unfair pipeline can become a cleaner way of reproducing the pipeline.

That does not mean you should ignore model fairness. It means you should know what layer your fairness claim actually covers.

Power: Foucault and the New Workplace Visibility

Foucault's value is that he makes "visibility" morally serious.

A workplace AI system can do more than automate a task. It can turn work into a continuous record. It can rank employees, classify behaviours, infer mood, predict attrition, allocate shifts, flag anomalies, detect low engagement, summarize calls, score tone, and decide which cases deserve escalation. Some of this can help. Some of it can be abusive. The important point is that it changes the field of power.

Foucault's examination is the right analogy. The exam does not only reveal whether a student knows the material. It creates a documented individual: a grade, rank, profile, trajectory, risk, case. Once the worker becomes a case, management can compare, intervene, normalize, and optimize. The worker may never know exactly how the profile is built. They may adjust behaviour pre-emptively because the possibility of being scored is always present.

That is why "the model is accurate" is not enough. Accurate measurement can still be oppressive when the wrong thing is measured, when measurement is continuous, when workers cannot contest the record, when inference exceeds consent, or when the system converts every deviation from average into a management event.

The EU AI Act's treatment of employment and worker-management AI as high-risk is not an accident. Systems used for recruitment, selection, promotion, termination, task allocation based on individual behaviour or traits, and worker monitoring affect livelihoods, rights, and career prospects.[6] A model in this setting is not just a productivity tool. It is part of a governance system.

Foucault also prevents a naive answer. The issue is not simply "surveillance bad, transparency good." Some measurement protects workers. Safety monitoring can save lives. Bias audits require data. Wage theft enforcement requires records. Accountability requires visibility.

The question is: visibility for whom, under what rules, with what rights of contestation, and with what limits?

A worker-visible system that lets employees inspect scheduling logic, challenge errors, document unsafe conditions, and prove unpaid work is very different from a management-visible system that silently scores "attitude" and "culture fit." Both are data systems. Only one increases worker power.

For AI builders, the Foucault test is brutal and useful:

| Design question | Bad answer | Better answer |
|---|---|---|
| Who is made visible? | "Users/workers." | Specific roles, groups, and edge cases are named. |
| What is inferred? | "Performance." | The exact behavioural proxies and uncertainty are documented. |
| Who sees the record? | "Authorized admins." | Access is role-limited, logged, and reviewable. |
| How can it be contested? | "Contact support." | There is a real appeal path with human authority. |
| What cannot be measured? | "Everything useful." | Some domains are explicitly off-limits. |

If there is no contestability, do not call it accountability. Call it control.

Labour: Marx and the Ownership Question

Marx's strongest contribution here is not a prediction about mass unemployment. It is the insistence that technology enters a class relation.

A model is not deployed into a neutral economy. It is deployed into firms with owners, managers, workers, contractors, users, suppliers, creditors, and regulators. The model may raise output. But the split of that output is not decided by the model's loss curve. It is decided by contracts, ownership, market structure, law, unions, switching costs, intellectual property, and the ability of workers to refuse.

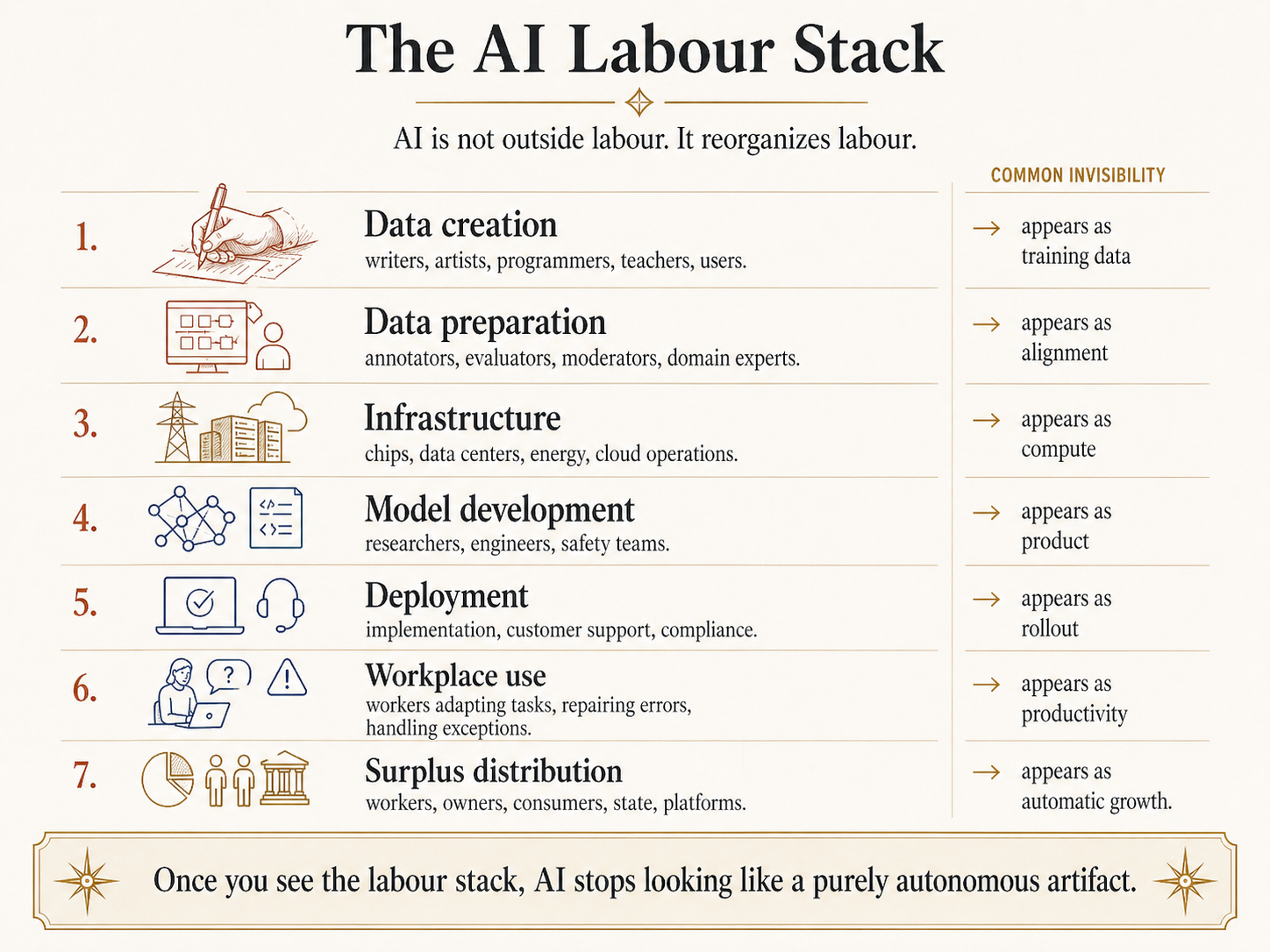

AI also exposes the mistake of imagining technology as cleanly separate from labour. The AI stack is full of labour:

| Layer | Labour involved | Common invisibility |
|---|---|---|
| Data creation | Writers, artists, programmers, teachers, scientists, forum users, journalists, translators | Their work appears as "training data" |
| Data preparation | Annotators, labelers, moderators, evaluators, domain experts | Their judgment appears as "alignment" |
| Infrastructure | Chip fabrication, data centers, energy, networking, cloud operations | Compute appears as abstract capacity |
| Model development | Researchers, engineers, safety teams, product teams | The model appears as an autonomous artifact |
| Deployment | Customer support, sales, implementation, compliance, monitoring | Adoption appears as software rollout |
| Workplace use | Workers adapting tasks around the tool | Human repair work appears as AI productivity |

Once you see the labour stack, two claims become easier to hold together. First, AI can genuinely automate and augment tasks. Second, AI is not independent of human labour; it reorganizes and absorbs human labour into technical systems.

The more politically sensitive issue is surplus. If an AI system allows a team of ten to produce what previously required twenty, what happens? The firm may reduce headcount. It may keep headcount and produce more. It may lower prices. It may raise wages. It may increase executive compensation. It may return capital to shareholders. It may do some combination.

Nothing in the model itself determines the answer.

This is why the phrase "AI will make workers more productive" is ambiguous. Productive for whom? If a worker produces more but receives the same wage, the gain accrues elsewhere. If AI encodes expert knowledge into a tool that lowers the value of expert labour, productivity can rise while bargaining power falls. If junior workers use AI to move faster but lose the apprenticeship path by which they used to become senior workers, the labour market may look efficient while hollowing out its own skill pipeline.
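The ten-versus-twenty example can be worked through as arithmetic. The sketch below assumes a hypothetical team with a fixed wage and revenue figure and compares the labour share of revenue under three of the options listed above. All numbers are invented for illustration, and `labour_share` is a name introduced here.

```python
def labour_share(headcount, wage, revenue):
    """Fraction of revenue paid out as wages."""
    return headcount * wage / revenue


# All figures are hypothetical. Baseline: 20 workers, wage 50 each, revenue 2000.
SCENARIOS = {
    "baseline (before AI)": dict(headcount=20, wage=50.0, revenue=2000.0),
    # Ten workers now produce the old output; wages unchanged.
    "cut headcount, wage flat": dict(headcount=10, wage=50.0, revenue=2000.0),
    # Ten workers produce the old output and are paid for the doubled productivity.
    "cut headcount, double wage": dict(headcount=10, wage=100.0, revenue=2000.0),
    # Twenty workers produce twice the output (assumes it sells at the old price).
    "keep headcount, double output, wage flat": dict(headcount=20, wage=50.0, revenue=4000.0),
}

for name, s in SCENARIOS.items():
    per_worker = s["revenue"] / s["headcount"]
    print(f"{name}: revenue per worker = {per_worker:.0f}, "
          f"labour share = {labour_share(**s):.2f}")
```

In every post-AI scenario revenue per worker doubles from 100 to 200, yet the labour share is 0.25 in two of them and 0.50 in the third. The productivity figure is identical across scenarios; only the wage and headcount decisions differ.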

Marx's lens is not complete. The labour theory of value is contested. Not every profit is theft. Not every automation is exploitation. Many workers want better tools, and many AI systems will remove miserable tasks that nobody should romanticize.

Still, Marx catches what the productivity story hides:

The important economic question is not only whether AI creates value. It is who controls the conditions under which that value is created and distributed.

If you are building an AI product for workplaces, this should matter commercially too. Products that obviously transfer all benefit upward while making workers more monitored will face resistance, regulatory risk, brand risk, and adoption problems. Worker-hostile automation is not just morally thin. It is often bad product strategy.

Situation: Beauvoir and the Unequal Burden of Adaptation

Beauvoir keeps the essay honest about "the worker."

There is no generic worker. There are workers in situations. A junior analyst with savings and a STEM degree is not situated like a single parent in administrative work. A senior engineer whose productivity rises with AI is not situated like a translator whose rate collapses because clients now expect machine-assisted discounts. A doctor using AI as a second reader is not situated like a call-center employee whose tone is scored every minute.

This matters because AI transition advice often hides behind universal verbs: adapt, reskill, augment, learn, pivot. Those verbs are cheap when spoken from above. Whether adaptation is realistic depends on money, time, health, childcare, location, immigration status, credentials, local labour demand, and whether the new role preserves dignity.

Beauvoir's analysis of oppression is useful because it treats freedom as embodied and situated. Economic independence matters, but it is not the whole story. Social norms, bodily autonomy, public policy, private life, and material dependence all shape what a person can actually do. For AI, that means we cannot assess the transition only by counting exposed tasks. We have to ask how exposure lands in lived lives.

This is where gender matters without becoming a slogan. The ILO has emphasized that generative AI exposure is shaped by the occupational distribution of clerical and administrative work, which is often gendered.[7] A policy that says "AI will mostly augment jobs, not replace them" may be true in aggregate and still miss the fact that certain workers experience the transition as lost hours, degraded status, reduced promotion paths, or an expectation to do more with less.

Beauvoir also clarifies a subtler failure: augmentation can be domination.

A worker is not freer merely because the tool makes them faster. They are freer if the tool expands meaningful control over their work, improves their material options, and preserves their ability to refuse, challenge, learn, and advance. A nurse whose AI system reduces paperwork may gain freedom. A teacher whose AI system turns lesson planning into compliance with a district dashboard may lose it. A designer whose AI system expands creative range may gain freedom. A designer whose employer uses the same tool to devalue the role may lose it.

The same technology can expand freedom in one situation and narrow it in another. That is Beauvoir's contribution to the AI economy: there is no moral assessment without the concrete situation.

A Worked Case: Call-Center AI

The four lenses become useful when they are run through one concrete domain rather than recited as a list. The richest published case is generative-AI assistance for customer support agents.

Brynjolfsson, Li, and Raymond (2023) studied the staggered rollout of an LLM-based assistant to more than 5,000 agents at a large US software firm. Headline finding: a 14 percent average increase in issues resolved per hour, with the largest gains concentrated among novice and lower-skilled workers. Experienced top-quartile agents showed essentially no productivity change. Customer sentiment in chat transcripts also improved on average, and agent attrition fell.[2]

That single result is enough to run the four lenses cleanly.

Rawls. The headline finding looks Rawlsian on its face: gains accrue most to the lowest-skill workers, which is the direction the difference principle prefers. But Rawls's actual question is structural, not local. Where does the productivity surplus go? The paper measures issues per hour and customer ratings; it does not measure how the firm split the gains between agents (raises, retained jobs), shareholders (margin), customers (lower prices, better service), or the AI vendor (license fees). Without the distribution table, "novice agents do better" is a fairness claim about the model and a silence about the institution.

Foucault. Call-center work was already heavily measured before LLMs arrived: average handle time, call recording, post-call survey scores, schedule adherence. The AI assistant adds two new categories of measurement. First, it sees every keystroke and recommendation, so the assistant's logs are themselves a worker-monitoring record. Second, "agent uses suggestion X percent of the time" becomes a tractable metric, and once tractable, it tends to become managerial. The assistant turns the worker into a more documented case. Whether that documentation lifts low performers (training signal, fairer review) or pressures everyone to follow the model (deskilling, suppressed dissent) depends on who can read the logs and contest them.

Marx. The labour stack inside this one product is large: the contact-center workers themselves, the engineers building the assistant, the data labelers who annotated training examples, the platform vendor selling the seat license, and the cloud provider charging per inference. The 14 percent productivity gain was not generated by the model alone. It emerged from agents repairing the model's wrong recommendations, learning when to override it, and absorbing the model's mistakes into customer-facing language. That repair work shows up in the productivity number as if the model produced it. Whether the agents see any of the surplus depends on contracts, seat-license pricing, headcount decisions made before the data appears, and bargaining strength outside the model.

Beauvoir. Two agents at the same call center see the same tool from different situations. A part-time agent with caregiving responsibilities, no degree, and a non-portable visa has more to lose if the firm uses the productivity gain to consolidate shifts. A college-student agent has more to gain if the new productivity opens room for promotion or transfer. The aggregate gain is real. It does not land equally. Beauvoir's point is not that the average is wrong, but that the average obscures whose options narrowed.

| Lens | What the headline number does not tell you about the call-center case |
|---|---|
| Rawls | Where the surplus went: agent wages, firm margin, customer prices, vendor fees, public revenue |
| Foucault | Whether agents can inspect, contest, or refuse the assistant's logs as performance evidence |
| Marx | Whether the productivity reflects worker repair labour absorbed as "AI output" |
| Beauvoir | Whose situation lets them turn the productivity gain into mobility versus whose situation makes it a tightening of shifts |

The four lenses do not produce a single verdict. Two of them (Rawls, Beauvoir) push toward "wait, look at distribution and situation." Two of them (Foucault, Marx) push toward "the productivity number under-describes what changed." That is the right way to use the lenses: as four different audits run on the same firm-level claim, not as one consensus diagnosis. The case study is the simplest place to see that the lenses argue with each other rather than line up.

A second exercise the reader can run: take the same four lenses and apply them to résumé screening, warehouse worker monitoring, or AI coding assistants. The dominant lens changes with the domain. In résumé screening, Rawls dominates because the system rations opportunity. In warehouse monitoring, Foucault dominates because measurement is the product. In coding assistants, Marx dominates because the question of who captures the productivity gain over a 5-year horizon is genuinely open and depends on bargaining outside the model.

Seven Questions for Builders

Most AI builders will not rewrite labour law. They will ship systems. That makes the following design discipline practical, not decorative.

Before shipping a system that affects work, hiring, education, credit, insurance, public services, or worker management, answer seven questions.

| Question | Why it matters |
|---|---|
| What decision or action does the system influence? | Vague "assistance" often hides real authority. |
| Who is measured, and what proxies are used? | Foucault's problem starts at measurement. |
| Who can inspect and contest the result? | No appeal means no meaningful accountability. |
| Who receives the productivity gain? | Marx's question does not disappear because the UI is friendly. |
| Does the system expand or narrow worker discretion? | Augmentation without agency can still be control. |
| Which groups face the highest downside if the system fails? | Beauvoir's situation lens blocks abstract averages. |
| Is this a high-risk domain under relevant law? | Employment, worker management, education, essential services, and credit are not casual app categories. |

The worst product move is to ship a black-box workplace system, call it "AI productivity," and treat ethics as a fairness report after the fact. That is how you create adoption backlash, regulatory exposure, and real harm.

The better product move is to design for contestability from day one. Workers and affected users should know when AI is materially involved, what kind of inference is being made, where uncertainty is high, how to appeal, what data is retained, and which decisions remain human. Managers should not be given fake precision. If a score is a weak proxy, make it look weak. If a recommendation should not be used for termination, say so in the product and enforce it in permissions.
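One way to read "enforce it in permissions" is purpose-limited access: a record is released only for purposes the policy names, everything else is denied by default, and every attempt is logged so access stays reviewable. The following is a minimal sketch under those assumptions. The record types, purposes, and the `RecordStore` class are hypothetical names chosen for illustration, not the API of any real product.

```python
from dataclasses import dataclass, field
from enum import Enum


class Purpose(Enum):
    COACHING = "coaching"
    QUALITY_REVIEW = "quality_review"
    TERMINATION = "termination"


# Hypothetical policy: which purposes each record type may serve.
# Anything not listed here is denied by default.
ALLOWED = {
    "assistant_suggestion_log": {Purpose.COACHING},
    "call_recording": {Purpose.COACHING, Purpose.QUALITY_REVIEW},
}


class PurposeNotPermitted(PermissionError):
    """Raised when a record is requested for a purpose the policy excludes."""


@dataclass
class RecordStore:
    audit_log: list = field(default_factory=list)

    def read(self, record_type, purpose, requester):
        allowed = purpose in ALLOWED.get(record_type, set())
        # Log every attempt, including refusals, so access is reviewable.
        self.audit_log.append((requester, record_type, purpose.value, allowed))
        if not allowed:
            raise PurposeNotPermitted(
                f"{record_type} may not be used for {purpose.value}"
            )
        return f"<{record_type} released to {requester} for {purpose.value}>"


store = RecordStore()
print(store.read("assistant_suggestion_log", Purpose.COACHING, "team_lead"))
try:
    store.read("assistant_suggestion_log", Purpose.TERMINATION, "hr_manager")
except PurposeNotPermitted as err:
    print("refused:", err)
```

The design choice worth noting is that the refusal lives in the data path rather than in a policy document. A manager who wants to use assistant logs in a termination case has to change the policy table, and that change is itself visible.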

For founders, the ROI point is simple: trust is infrastructure. If your product changes livelihoods, you are not just selling automation. You are selling a governance layer. Build it like one.

A Five-Part Policy Bundle

The policy question is not "ban AI" versus "let innovation rip." That framing is unserious. The policy question is how to channel AI's productive capacity into institutions that people can accept as fair, contestable, and compatible with decent work.

A serious policy bundle has at least five parts.

First, high-risk decision systems need auditability and recourse. This is especially obvious in employment, education, credit, insurance, essential services, and public benefits. Affected people need more than a vague explanation. They need a way to challenge errors and a human process with authority.

Second, worker-management systems need boundaries. Not every measurable behaviour should be measured. Not every inference should be allowed. Emotion recognition, constant productivity surveillance, opaque risk scoring, and personality-based task allocation should face a high bar, especially when livelihoods are at stake.

Third, productivity gains need labour-market institutions. Wage insurance, portable benefits, sectoral training, apprenticeship pipelines, public procurement standards, and collective bargaining can determine whether AI gains become broad welfare or narrow rents. Without such institutions, "reskilling" becomes a polite way to shift risk onto workers.

Fourth, competition and infrastructure matter. If frontier AI requires enormous compute, data, talent, and distribution, then the AI economy can concentrate quickly. Antitrust, interoperability, public digital infrastructure, and procurement policy are not side issues. They shape who can build, who can bargain, and who can exit.

Fifth, exposure policy must be situated. Workers in highly exposed clerical, administrative, creative, and junior professional roles do not all need the same response. Some need better tools. Some need transition support. Some need protection from surveillance. Some need bargaining rights. Some need credentials and time. Some need childcare before they can "reskill." A policy that ignores situation is not neutral. It is lazy.

Where the Analogies Break

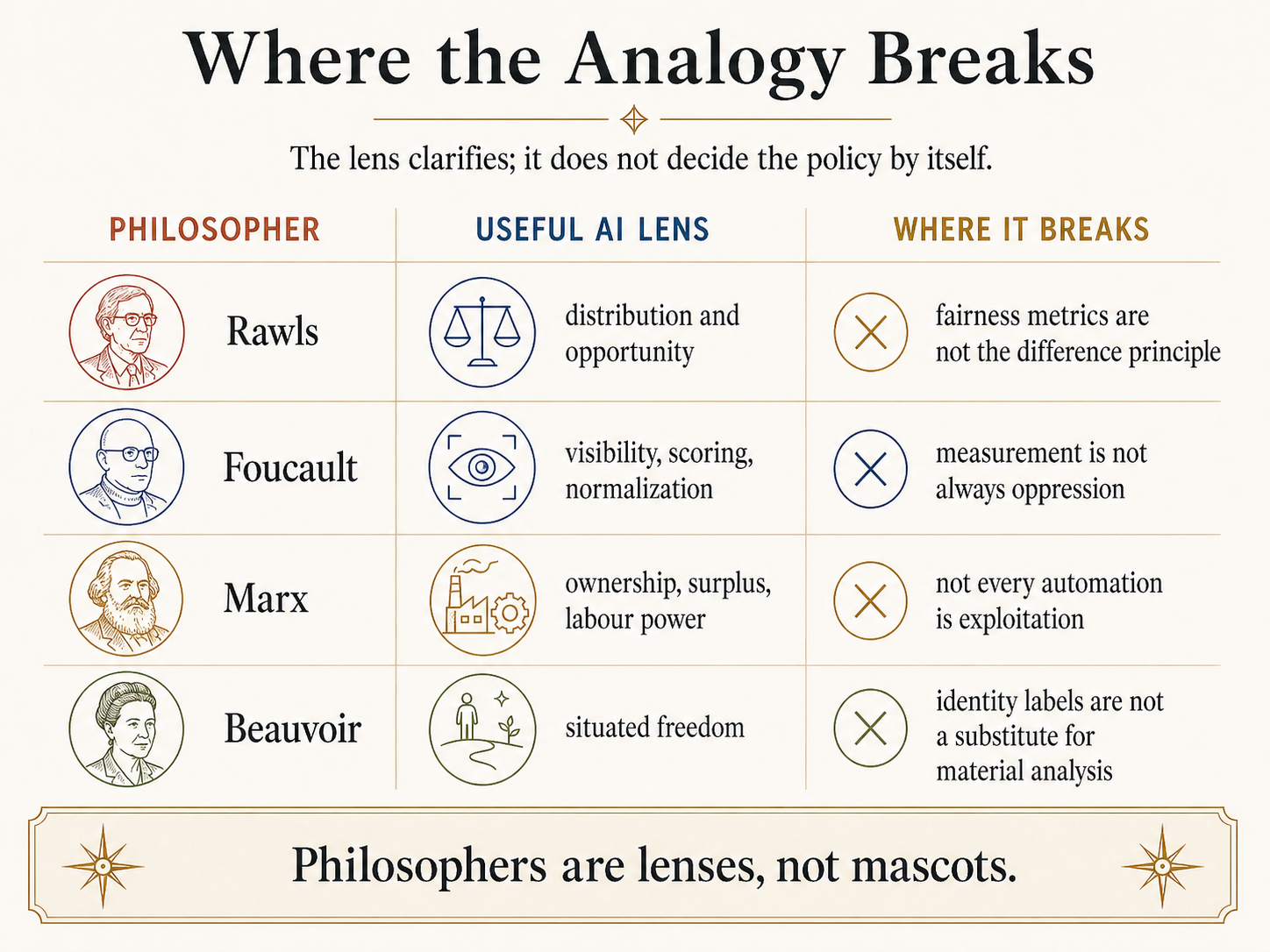

The lens discipline matters most in this essay because political philosophy is easy to abuse.

Rawls is not an algorithmic fairness researcher. His theory is about the basic structure of society, not a recipe for choosing between demographic parity, equalized odds, calibration, or counterfactual fairness. Using Rawls well means resisting the reduction of justice to local model metrics. Using him badly means pretending the difference principle directly outputs a compliance checklist.

Foucault is not a slogan that says all measurement is evil. He does not give a simple anti-AI veto. His work shows how knowledge, documentation, normalization, and power interlock. Some measurement is necessary for accountability. Some visibility protects vulnerable people. The question is not whether data exists. The question is what regime of power the data enables.

Marx is not a complete labour-market forecast. AI may create new tasks, new firms, new worker autonomy, and real productivity benefits. The Marxian lens is strongest when analyzing ownership, surplus, deskilling, and class power. It is weaker if used as a one-size-fits-all claim that every AI deployment is exploitation or that automation must collapse capitalism on schedule.

Beauvoir is not a decorative diversity citation. Her point is not merely that women should be mentioned. It is that freedom is situated. The same AI transition lands differently depending on gender, class, care obligations, credentials, geography, and social norms. The analogy breaks if "situation" becomes a vague synonym for identity categories without analyzing actual material constraints.

Finally, philosophy is not a substitute for empirical labour economics. Exposure estimates, productivity studies, wage data, adoption patterns, and firm-level evidence matter. The philosophical lenses clarify what to look for. They do not give permission to ignore evidence.

The Series Synthesis

The five essays now form a single arc.

The first essay asked how AI systems can know beyond their training data. Locke, Hume, and Kant separated content, induction, and structure.

The second and third essays asked whether systems trained on representations can escape the cave of uncorrected representation, and whether interactive experience provides escape or merely a better cave. Plato separated representation, source, and correction; the Era of Experience essay tested the modern empiricist response.

The fourth and fifth essays, the pair this one completes, ask what happens when those systems enter the economy. Rawls, Foucault, Marx, and Beauvoir separate fairness, power, labour, and situated freedom.

Together, the series says this:

The central question is not whether AI is intelligent, experiential, or productive in the abstract. The central question is what kind of world our institutions build around it.

That is the right final note. Not anti-AI. Not techno-utopian. Not safety-washing. Just structurally serious.

FAQ

Is this essay anti-AI?

No. It is anti-vibes. AI can improve productivity, reduce drudgery, expand access to expertise, and help workers. But none of that automatically settles distribution, surveillance, labour power, or situated freedom. A serious pro-AI position should care about those things because they determine whether people accept the technology as legitimate.

Are fairness metrics useless?

No. They are useful within their scope. If a model affects hiring, credit, education, insurance, or public services, group-level error rates and outcome disparities can matter a lot. The mistake is treating them as the whole of justice. Fairness metrics audit a decision layer. Rawlsian justice asks about the institutional structure around that layer.
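To make "group-level error rates and outcome disparities" concrete, here is a minimal sketch of a decision-layer audit. The function names, the record layout `(group, y_true, y_pred)`, and the toy data are all assumptions made for illustration; they do not come from any particular fairness library or from a real deployment.

```python
from collections import defaultdict


def group_rates(records):
    """Per-group selection rate and error rates.

    records: iterable of (group, y_true, y_pred) with 0/1 labels.
    This layout is a hypothetical choice for the sketch.
    """
    counts = defaultdict(
        lambda: {"n": 0, "selected": 0, "pos": 0, "neg": 0, "fp": 0, "fn": 0}
    )
    for group, y_true, y_pred in records:
        c = counts[group]
        c["n"] += 1
        c["selected"] += y_pred
        if y_true == 1:
            c["pos"] += 1
            c["fn"] += 1 - y_pred  # qualified but rejected
        else:
            c["neg"] += 1
            c["fp"] += y_pred  # unqualified but accepted

    rates = {}
    for group, c in counts.items():
        rates[group] = {
            "selection_rate": c["selected"] / c["n"],
            "false_positive_rate": c["fp"] / c["neg"] if c["neg"] else None,
            "false_negative_rate": c["fn"] / c["pos"] if c["pos"] else None,
        }
    return rates


def max_gap(rates, metric):
    """Largest between-group difference for one metric."""
    values = [r[metric] for r in rates.values() if r[metric] is not None]
    return max(values) - min(values) if values else None


# Toy data, invented for illustration only.
toy = [
    ("a", 1, 1), ("a", 1, 1), ("a", 0, 1), ("a", 0, 0),
    ("b", 1, 1), ("b", 1, 0), ("b", 0, 0), ("b", 0, 0),
]
rates = group_rates(toy)
print(rates)
print(max_gap(rates, "selection_rate"))
```

Everything this sketch reports is a property of the decision rule. It has no column for who captured the surplus, who can appeal, or who lost bargaining power, which is the scope limit the answer above describes.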

Is workplace surveillance always wrong?

No. Some measurement protects workers, improves safety, documents abuse, or prevents arbitrary management. The Foucault point is that measurement is never innocent just because it is accurate. The relevant questions are purpose, proportionality, access, appeal, retention, inference limits, and whether the system increases worker power or only management power.

Does "augmentation" mean workers are safe?

No. Augmentation can be good, but the word hides too much. A tool can augment a worker while also increasing pace, narrowing discretion, capturing expertise, reducing training pathways, and lowering bargaining power. Ask whether the worker gains agency, income, skill, mobility, and recourse. If not, "augmentation" may just be a nicer word for controlled dependency.

Why include Beauvoir?

Because "the worker" is not abstract. AI exposure lands on people with unequal time, money, credentials, care obligations, bodily constraints, social expectations, and exit options. Beauvoir's situation lens catches what aggregate productivity and exposure models miss: the difference between formal opportunity and lived freedom.

What should a builder take from this?

Do not ship livelihood-affecting systems with only a model card and a fairness chart. Build decision boundaries, recourse, uncertainty display, audit logs, role-based access, data-retention limits, and explicit rules for forbidden uses. If the product changes worker power, treat governance as part of the product, not as legal garnish.
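One way to treat governance as part of the product is to make the list above a release gate rather than a document. The sketch below is illustrative only: `GovernanceControls`, `missing_controls`, and `ready_to_ship` are hypothetical names, the one-line glosses in the comments are one reading of each item rather than definitions, and a boolean per control is a deliberate simplification of what would need evidence and review in practice.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class GovernanceControls:
    """Hypothetical release checklist mirroring the controls named above."""

    decision_boundaries: bool = False    # what the system may and may not decide
    recourse: bool = False               # a way for affected people to contest outcomes
    uncertainty_display: bool = False    # confidence shown to the human reviewer
    audit_logs: bool = False             # who ran what, on whom, and when
    role_based_access: bool = False      # who may see outputs and inferences
    data_retention_limits: bool = False  # how long records are kept
    forbidden_use_rules: bool = False    # explicit list of prohibited uses


def missing_controls(controls):
    """Names of controls that are not yet in place, in declaration order."""
    return [f.name for f in fields(controls) if not getattr(controls, f.name)]


def ready_to_ship(controls):
    """True only when every control is marked as in place."""
    return not missing_controls(controls)


# Example: a draft release with only logging and access control done.
draft = GovernanceControls(audit_logs=True, role_based_access=True)
print(missing_controls(draft))
print(ready_to_ship(draft))
```

A gate like this does not prove the controls are any good. It only makes their absence visible at the moment someone decides to ship.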

Sources

Primary philosophical texts:

- Rawls, John. A Theory of Justice. Harvard University Press, 1971; revised edition 1999.

- Rawls, John. Justice as Fairness: A Restatement. Harvard University Press, 2001.

- Foucault, Michel. Discipline and Punish: The Birth of the Prison. 1975; English translation 1977.

- Foucault, Michel. The History of Sexuality, Volume 1. 1976; English translation 1978.

- Foucault, Michel. The Birth of Biopolitics: Lectures at the Collège de France, 1978-1979. English translation 2008.

- Marx, Karl. Capital, Volume I. 1867.

- Marx, Karl. Grundrisse. Manuscripts of 1857-1858; especially the "Fragment on Machines."

- Beauvoir, Simone de. The Second Sex. 1949.

- Beauvoir, Simone de. The Ethics of Ambiguity. 1947.

Modern AI, labour, and governance sources:

- Cazzaniga, Mauro, et al. "Gen-AI: Artificial Intelligence and the Future of Work." IMF Staff Discussion Note, 2024. https://www.imf.org/en/publications/staff-discussion-notes/issues/2024/01/14/gen-ai-artificial-intelligence-and-the-future-of-work-542379

- Gmyrek, Paweł, Berg, Janine, et al. "Generative AI and Jobs: A Refined Global Index of Occupational Exposure." ILO Working Paper, 2025. https://www.ilo.org/publications/generative-ai-and-jobs-refined-global-index-occupational-exposure

- Stanford Institute for Human-Centered Artificial Intelligence. "2026 AI Index Report: Economy." 2026. https://hai.stanford.edu/ai-index/2026-ai-index-report/economy

- European Union. "Artificial Intelligence Act, Regulation (EU) 2024/1689." Annex III, 2024. https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX%3A32024R1689

- NIST. Artificial Intelligence Risk Management Framework (AI RMF 1.0). NIST AI 100-1, 2023. https://www.nist.gov/itl/ai-risk-management-framework

- Eloundou, Tyna, Manning, Sam, Mishkin, Pamela, and Rock, Daniel. "GPTs are GPTs: An Early Look at the Labor Market Impact Potential of Large Language Models." arXiv:2303.10130, 2023.

- Brynjolfsson, Erik, Li, Danielle, and Raymond, Lindsey R. "Generative AI at Work." NBER Working Paper 31161, 2023; Quarterly Journal of Economics. https://www.nber.org/papers/w31161

- Acemoglu, Daron. "The Simple Macroeconomics of AI." 2024. https://economics.mit.edu/sites/default/files/2024-04/The%20Simple%20Macroeconomics%20of%20AI.pdf

- Barocas, Solon, Hardt, Moritz, and Narayanan, Arvind. Fairness and Machine Learning: Limitations and Opportunities. 2023. https://fairmlbook.org/

Standard scholarly references:

- "John Rawls." Stanford Encyclopedia of Philosophy. https://plato.stanford.edu/entries/rawls/

- "Original Position: The Argument for the Difference Principle." Stanford Encyclopedia of Philosophy. https://plato.stanford.edu/entries/original-position/difference-principle.html

- "Michel Foucault." Stanford Encyclopedia of Philosophy. https://plato.stanford.edu/entries/foucault/

- "Karl Marx." Stanford Encyclopedia of Philosophy. https://plato.stanford.edu/entries/marx/

- "Simone de Beauvoir." Stanford Encyclopedia of Philosophy. https://plato.stanford.edu/entries/beauvoir/

- "Exploitation." Stanford Encyclopedia of Philosophy. https://plato.stanford.edu/entries/exploitation/

Internal Links

- PhilosophyPath:

  - four-lenses-on-the-ai-economy for the first half of this argument.

  - empiricism-induction-and-llm-limits; platos-cave-and-the-era-of-experience for earlier essays in the series.

  - rawls, foucault, marx, beauvoir (forthcoming philosopher landings).

- TheoremPath:

  - algorithmic-fairness (forthcoming).

  - calibration-and-uncertainty; proper-scoring-rules.

- PedagogyPath (forthcoming).

Footnotes

1. Brynjolfsson, Erik, Danielle Li, and Lindsey R. Raymond. "Generative AI at Work." Quarterly Journal of Economics 140, no. 2 (2025): 889-942. NBER Working Paper 31161, 2023.

2. Brynjolfsson, Li, and Raymond, "Generative AI at Work," NBER Working Paper 31161, 2023; later published in Quarterly Journal of Economics.

3. ILO, "Generative AI and Jobs: A Refined Global Index of Occupational Exposure," 20 May 2025.

4. IMF, "Gen-AI: Artificial Intelligence and the Future of Work," 2024.

5. Acemoglu, Daron. "The Simple Macroeconomics of AI," 2024.

6. EU AI Act, Regulation (EU) 2024/1689, Annex III.

7. ILO Working Paper 96, "Generative AI and Jobs: A Global Analysis of Potential Effects on Job Quantity and Quality," 2023.